Google Gemini AI Model: Architecture, Benchmarks & Multimodal Capabilities Explained

Table of Contents

- Introduction: What Is Google Gemini?

- The Gemini Model Family: Ultra, Pro, and Nano

- Architecture and Training Infrastructure

- Multimodal Capabilities: Beyond Text Understanding

- Benchmark Performance: Gemini vs GPT-4

- On-Device AI: Gemini Nano for Edge Deployment

- Real-World Applications and Deployment

- Safety, Responsibility, and Post-Training

- Impact on the AI Landscape and Future Outlook

📌 Key Takeaways

- State-of-the-art performance: Gemini Ultra achieves SOTA on 30 of 32 benchmarks and is the first model to surpass human-expert performance on MMLU (90.0%).

- Native multimodality: Unlike GPT-4 which adds vision as a bolt-on, Gemini was trained from the ground up to process text, images, audio, video, and code together.

- Three model sizes: Ultra for complex reasoning, Pro for efficient production use, and Nano (1.8B/3.25B params) for on-device deployment.

- Massive training infrastructure: Trained on TPUv4 and TPUv5e clusters across multiple data centers using JAX and Pathways, with novel hot-swap fault tolerance.

- Broad deployment: Powers Google products including Bard (now Gemini), Google AI Studio, Cloud Vertex AI, and on-device features on Pixel phones.

Introduction: What Is Google Gemini?

In December 2023, Google DeepMind introduced Gemini — a family of highly capable multimodal models that represents one of the most ambitious AI projects ever undertaken. The original research paper describes a system that can natively understand and reason across text, images, audio, video, and code, all within a single unified architecture.

What sets Gemini apart from its predecessors — and from competitors like OpenAI's GPT-4 — is that multimodality wasn't an afterthought. While GPT-4V added image understanding as a separate capability, Gemini was designed from its very first training step to process multiple modalities simultaneously. This architectural decision yields profound advantages in cross-modal reasoning, where the model must combine information from images, text, and audio to solve complex problems.

The Gemini family consists of three size variants: Ultra, Pro, and Nano. Each targets a different use case — from the most complex multi-step reasoning tasks requiring the full power of Ultra, to lightweight on-device inference with Nano running directly on a smartphone. This strategic sizing reflects Google's understanding that AI deployment isn't one-size-fits-all; the edge, the cloud, and the research frontier all demand different trade-offs between capability and efficiency.

The results speak for themselves. Gemini Ultra achieved state-of-the-art performance on 30 of 32 benchmarks evaluated, and became the first model to surpass human-expert performance on MMLU — the Massive Multitask Language Understanding benchmark that tests knowledge across 57 subjects. This wasn't a narrow victory; it represented a clear step-function improvement in AI capabilities that sent shockwaves through the research community.

The Gemini Model Family: Ultra, Pro, and Nano

Google's decision to release Gemini in three sizes reflects a sophisticated understanding of the AI deployment landscape. Each model variant was engineered for a specific tier of computational requirements and use cases, creating an ecosystem rather than a single monolithic system.

Gemini Ultra: The Frontier Model

Gemini Ultra is the flagship — the most capable model in the family, designed to push the boundaries of what AI can achieve. While Google hasn't disclosed the exact parameter count (following the trend set by GPT-4 and PaLM 2 of keeping architectural details vague), the model clearly operates at massive scale. Ultra targets complex reasoning tasks that require synthesizing information across multiple modalities and long reasoning chains.

The Ultra model achieves its strongest results when combined with uncertainty-routed chain-of-thought (CoT) prompting. In this approach, the model generates multiple samples (typically 8 or 32), selects the majority vote when confidence exceeds a threshold, and falls back to greedy sampling without CoT otherwise. This technique, similar to chain-of-thought self-consistency, pushes MMLU performance to 90.0% — the first superhuman score on this benchmark.

Gemini Pro: The Production Workhorse

Gemini Pro occupies the middle tier, designed to be highly competitive while remaining dramatically more efficient to serve than Ultra. The Pro model delivered strong performance across text, reasoning, and multimodal benchmarks — making it the version most users initially accessed through Bard (later renamed to Gemini) and through Google's API offerings.

For most production applications, Pro represents the sweet spot. It maintains enough capability to handle complex queries while fitting within the cost and latency constraints that real-world applications demand. Google made Pro available through Vertex AI and Google AI Studio shortly after the announcement, enabling developers to build on the technology immediately.

Gemini Nano: AI on the Edge

Perhaps the most technically interesting variant, Gemini Nano demonstrates that frontier-class capabilities can be distilled into models small enough to run on a smartphone. Nano comes in two sizes: Nano-1 at 1.8 billion parameters and Nano-2 at 3.25 billion parameters. Both were created through distillation from larger Gemini models, preserving as much capability as possible within tight memory and compute constraints.

Nano-1 and Nano-2 were specifically designed for Google's Pixel 8 Pro, where they power features like Smart Reply in messaging apps and audio summarization. Running AI inference directly on-device eliminates the latency and privacy concerns of cloud-based processing — a critical advantage for consumer applications where users expect instant, private responses.

Discover how multimodal AI models are transforming document understanding and interaction.

Architecture and Training Infrastructure

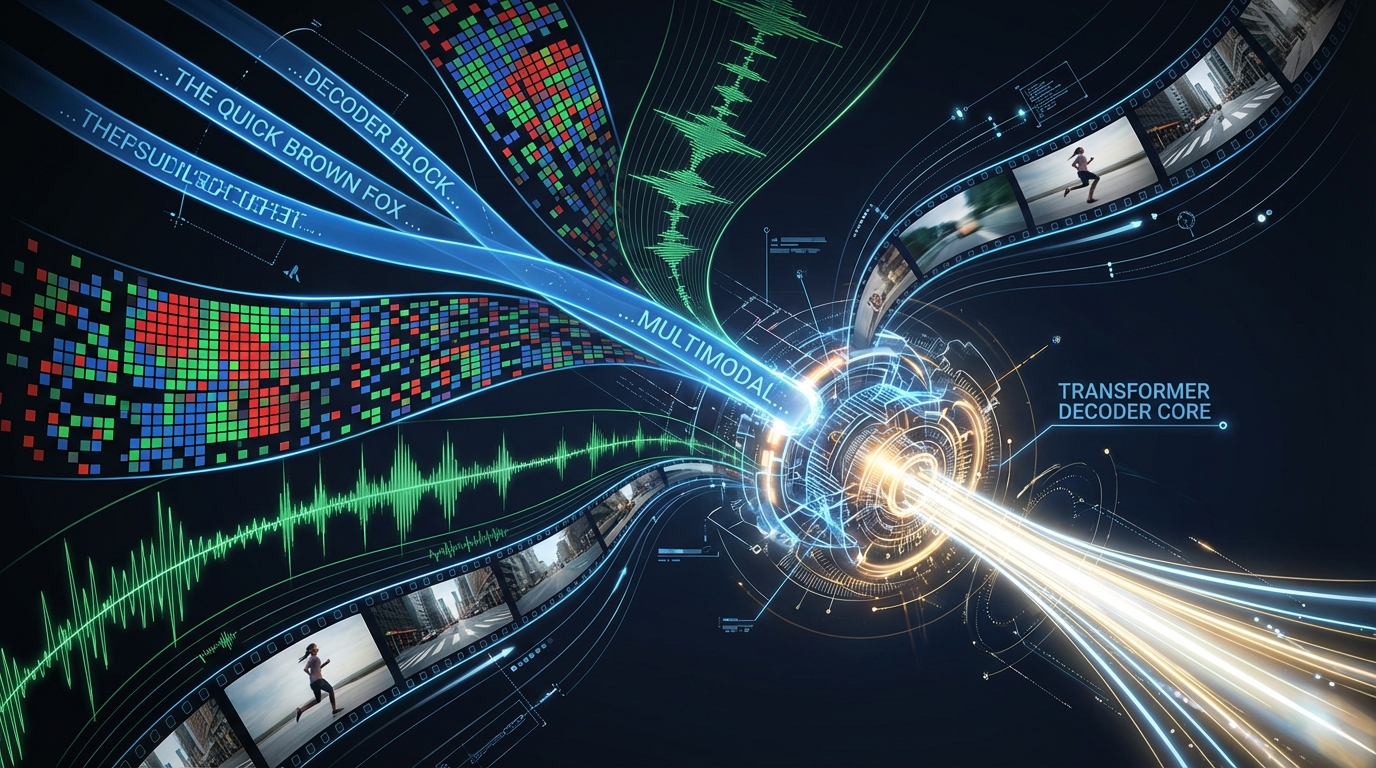

At its core, Gemini uses a transformer decoder architecture — the same foundational design that powers most modern large language models. However, Google applied significant architectural improvements and optimizations to achieve stable training at unprecedented scale and efficient inference on their custom TPU hardware.

Multimodal Input Processing

Gemini processes inputs across five modalities: text, images, audio, video, and code. Text is tokenized using SentencePiece, which the team found produces a higher-quality vocabulary when trained on a large portion of the dataset. Images are processed at various resolutions. Audio is accepted as features from Google's Universal Speech Model (USM) at a 16 kHz sampling rate. Video is encoded as a sequence of frames, fitting within the model's 32,768-token context window.

On the output side, Gemini can generate both text and images (using discrete pictorial tokens). This bidirectional multimodality places Gemini a level above competitors like GPT-4V, which at launch only accepted images as input alongside text but could not generate visual outputs.

TPU-Scale Training

The training infrastructure for Gemini represents an engineering marvel. Google trained the models on TPUv4 and TPUv5e accelerator clusters, with the scale significantly exceeding that of PaLM 2. For the Ultra model, Google employed an innovative approach: 4×4×4 cubes of TPU processors were maintained in each TPU Pod for hot swapping. Optical switches can reconfigure these cubes into any arbitrary 3D-torus topology in less than 10 seconds — a critical capability when training runs span weeks or months.

Training was distributed across multiple data centers, leveraging Google's high-bandwidth internal network. Model parallelism was used within super-pods, while data parallelism coordinated work between them. The training framework used JAX and Google's Pathways system, which enables efficient orchestration of computation across heterogeneous hardware.

Silent Data Corruption and Fault Tolerance

At the scale Gemini operates, new failure modes emerge that simply don't exist in smaller training runs. One of the most insidious is Silent Data Corruption (SDC) — where data quietly corrupts during computation without hardware-level detection. Google estimated this occurs approximately once every one to two weeks during Ultra training. A CPU might occasionally compute 1+1=3, and without explicit checks, this corruption propagates silently through the model.

To combat this, Google implemented a comprehensive set of measures to achieve determinism across the entire training architecture. The team described determinism as a "necessary ingredient for stable training at such a scale" — if you can't reproduce a training step exactly, you can't detect or recover from corruption.

Training Data and Tokenization

The training dataset is multimodal and multilingual, encompassing web data, books, code, images, audio, and video. While specific dataset details remain sparse, the team confirmed several important methodological choices. Token counts were determined following Chinchilla scaling recipes, which provide optimal compute-to-data ratios. For smaller models like Nano, significantly more tokens were used relative to model size to maximize inference-time quality. The team also employed curriculum-style training, adjusting the proportion of domain-specific datasets toward the end of training so that specialized data had greater influence during the critical final stages.

Multimodal Capabilities: Beyond Text Understanding

Gemini's multimodal capabilities represent a genuine paradigm shift from the "language model with vision bolted on" approach that had dominated the field. By training on interleaved sequences of text, images, audio, and video from the start, Gemini develops cross-modal representations that enable reasoning patterns impossible for text-only or vision-only models.

Image Understanding and Reasoning

Gemini demonstrates remarkable capabilities in visual reasoning. The model can examine complex diagrams, charts, and photographs, extracting not just surface-level descriptions but deep logical relationships. In the paper's examples, Gemini correctly interprets handwritten mathematical equations, identifies subtle patterns in scientific visualizations, and reasons about spatial relationships in architectural drawings.

On multimodal benchmarks, Gemini Ultra achieved state-of-the-art performance across all 20 benchmarks tested. On MMMU — the Multimodal Multitask Reasoning benchmark requiring deliberate reasoning across domains — Ultra scored 59.4%, substantially outperforming previous models.

Audio and Speech Processing

One of Gemini's standout capabilities is speech recognition. The model achieved higher accuracy than both Google's specialized Universal Speech Model (USM) and OpenAI's Whisper v2/v3 across multiple speech recognition datasets. This is a striking result: a single general-purpose multimodal model outperforming purpose-built speech systems. Even Nano-1, the smallest variant at 1.8B parameters, matched or exceeded specialized models of comparable size — suggesting that multimodal training transfers valuable representations even to compact models.

Video Understanding

Gemini processes video as a sequence of frames within its context window, enabling temporal reasoning about events, actions, and narrative progression. While the 32K context window limits the model to relatively short video clips, the quality of understanding within that window is impressive. The model can track objects across frames, understand cause-and-effect relationships in video sequences, and answer complex questions that require synthesizing visual and temporal information.

Cross-Modal Reasoning

Perhaps the most powerful capability is cross-modal reasoning — problems that require combining information from multiple modalities simultaneously. The research paper showcases examples where Gemini examines an image, reads text within it, performs mathematical calculations based on the visual data, and produces coherent textual explanations. Previous models could only achieve such feats by chaining together separate tools or plugins; Gemini does it natively within a single forward pass.

Want to see how AI transforms complex documents into engaging interactive experiences?

Benchmark Performance: Gemini vs GPT-4

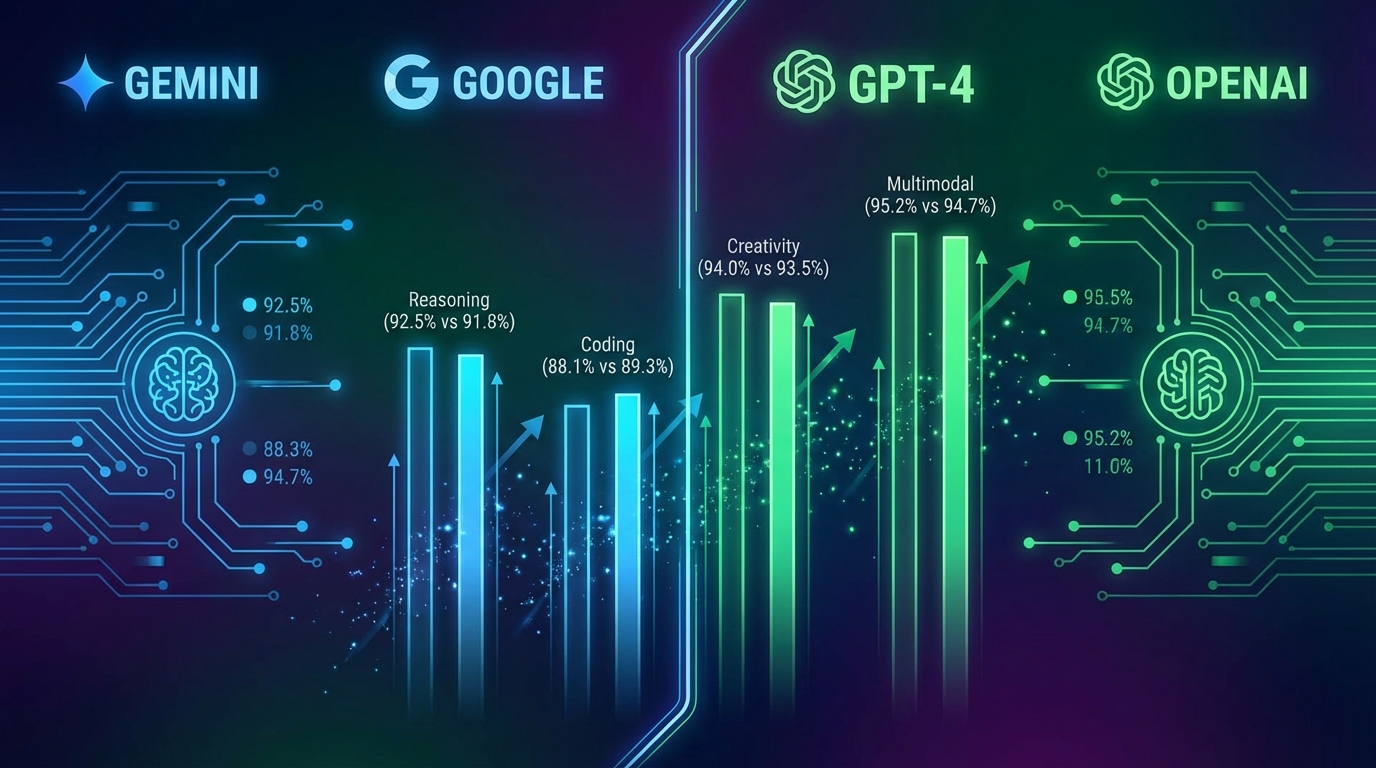

The benchmark comparisons between Gemini Ultra and GPT-4 tell a nuanced story — one that defies simple "winner takes all" narratives and reveals important methodological considerations that every AI practitioner should understand.

Text-Based Benchmarks

On MMLU, Gemini Ultra achieved 90.0% using uncertainty-routed chain-of-thought at 32 samples (CoT@32), compared to GPT-4's 86.4% with standard prompting. This made Gemini the first model to surpass the estimated human-expert threshold of ~89.8%. However, an important caveat: on pure greedy decoding (vanilla output without CoT orchestration), GPT-4 actually outperforms Gemini Ultra. The CoT@32 technique requires generating 32 separate responses and selecting the majority vote — a significant computational overhead that inflates both cost and latency.

| Benchmark | Gemini Ultra | GPT-4 | Winner |

|---|---|---|---|

| MMLU (CoT@32 / 5-shot) | 90.0% | 86.4% | Gemini |

| GSM8K (Math) | 94.4% | 92.0% | Gemini |

| MATH | 53.2% | 52.9% | ≈ Tie |

| HumanEval (Code) | 74.4% | 67.0% | Gemini |

| HellaSwag | 87.8% | 95.3% | GPT-4 |

| BIG-Bench Hard | 83.6% | 83.1% | ≈ Tie |

Multimodal Benchmarks

On multimodal benchmarks, Gemini Ultra's advantage over GPT-4V was more decisive. Across all 20 multimodal benchmarks evaluated, Gemini improved the state of the art — a remarkable sweep that reflects the model's superior multimodal training approach. On MMMU (59.4%), VQAv2, TextVQA, and DocVQA, Gemini Ultra set new records.

Machine Translation

An often-overlooked result is Gemini's machine translation performance. The predecessor PaLM 2 had already been shown to outperform production Google Translate, and Gemini Ultra extends this advantage further. For a general-purpose model to surpass a dedicated, heavily-optimized translation system speaks to the power of scale and multimodal pretraining.

Coding: AlphaCode 2

Built on Gemini Pro, AlphaCode 2 achieved the 87th percentile on competitive programming problems — up from the 46th percentile of the original AlphaCode. This leap demonstrates how Gemini's improved reasoning capabilities cascade through downstream applications, enabling more sophisticated code generation, debugging, and algorithmic problem-solving.

On-Device AI: Gemini Nano for Edge Deployment

While Ultra and Pro capture headlines, Gemini Nano may have the most transformative long-term impact. By bringing frontier-class AI capabilities directly to edge devices, Nano eliminates the three biggest barriers to AI adoption in consumer products: latency, privacy, and connectivity dependence.

Distillation from Larger Models

Nano-1 (1.8B parameters) and Nano-2 (3.25B parameters) were created through knowledge distillation from larger Gemini models. This process transfers the "knowledge" of a massive model into a compact one that can run within the memory and compute constraints of a mobile SoC. Critically, the distilled models were trained with many more tokens than the Chinchilla-optimal ratio — a technique that consistently improves quality at inference time for smaller models.

Performance at Scale

Despite their compact size, Nano models deliver impressive results. On factual knowledge, reasoning, and mathematical benchmarks, both Nano variants significantly outperformed other models of comparable size. Nano-2 in particular showed surprisingly strong performance on MMLU and common-sense reasoning tasks — demonstrating that careful distillation from a powerful teacher model can produce remarkably capable students.

On speech recognition, even Nano-1 matched or exceeded specialized models like Whisper, despite being a general-purpose model. This result challenges the conventional wisdom that specialized models always dominate their specific domains.

On-Device Deployment

Google deployed Nano on the Pixel 8 Pro, powering features like Smart Reply (intelligent message suggestions), audio summarization in the Recorder app, and contextual recommendations. Running inference on-device means responses are instant (no network round-trip), private (data never leaves the phone), and available offline.

Real-World Applications and Deployment

Google deployed the Gemini family across its product ecosystem with unprecedented speed, reflecting both the models' readiness and the company's urgency to compete with OpenAI's ChatGPT.

Bard → Gemini Consumer App

The most visible deployment was the rebranding of Bard — Google's conversational AI — to run on Gemini Pro, and later the launch of Gemini Advanced powered by Ultra. This gave hundreds of millions of users access to state-of-the-art multimodal AI capabilities through Google's consumer-facing interface.

Developer APIs

Through Google AI Studio and Cloud Vertex AI, developers gained programmatic access to Gemini Pro. The API supports multimodal inputs (text + images + audio), function calling, and structured output — enabling developers to build applications that leverage Gemini's cross-modal reasoning capabilities. The initial free tier on AI Studio helped drive rapid adoption among researchers and startups.

Google Workspace Integration

Gemini powers AI features across Google Workspace — Gmail, Docs, Sheets, Slides, and Meet. The model assists with email drafting, document summarization, data analysis in spreadsheets, presentation generation, and meeting transcription. These integrations reach billions of users, making Gemini one of the most widely deployed large language models in history.

Search and Advertising

Google Search's "AI Overviews" feature uses Gemini to generate comprehensive answers directly in search results. This application has enormous implications for the broader AI ecosystem — with potential to reshape how users discover and consume information on the web.

Transform your complex reports and documents into interactive experiences your audience will actually read.

Safety, Responsibility, and Post-Training

The Gemini paper dedicates significant attention to responsible deployment — reflecting the growing industry consensus that AI safety isn't optional but foundational. Google's approach encompasses both technical safeguards and governance frameworks.

Impact Assessment and Red-Teaming

Prior to deployment, Google conducted extensive impact assessments and red-teaming exercises. External experts were engaged to probe the model for harmful outputs, bias amplification, and potential misuse vectors. The team specifically tested for risks related to multimodal capabilities — such as the model being used to generate misleading image-text combinations or to extract sensitive information from documents.

Post-Training Alignment

Following pretraining, Gemini underwent extensive fine-tuning for alignment with human values and safety policies. This post-training phase includes reinforcement learning from human feedback (RLHF), constitutional AI techniques, and targeted training to reduce harmful outputs while maintaining helpfulness. The balance between safety and capability remains one of the most challenging problems in AI, and Google's approach reflects hard-won lessons from previous deployments.

Responsible Scaling

Google explicitly discusses the tension between scaling AI capabilities and ensuring responsible deployment. The company committed to ongoing monitoring, rapid response to discovered harms, and transparent reporting of model limitations. The deployment through controlled APIs (rather than open-sourcing the largest models) reflects a deliberate choice to maintain some degree of oversight over how frontier capabilities are used.

This approach contrasts with the open-source movement represented by models like DeepSeek-R1, which prioritize transparency and community access. Both approaches have merit, and the optimal balance between openness and control remains an active debate in the AI community.

Impact on the AI Landscape and Future Outlook

Gemini's release marked a pivotal moment in the AI race — the point at which Google demonstrated it could not only match but surpass OpenAI's GPT-4 on key benchmarks. The implications ripple across the industry, affecting competition, research directions, and the trajectory of AI development.

The Multimodal Paradigm

Gemini validated the hypothesis that natively multimodal training produces superior cross-modal understanding compared to bolting modalities onto a text model. This result has influenced the entire field: subsequent models from all major labs have moved toward more deeply integrated multimodal architectures. The era of "text LLM + separate vision encoder" is giving way to unified multimodal foundation models.

Scaling and Efficiency

The three-tier model strategy (Ultra/Pro/Nano) established a template that other labs have since adopted. The recognition that AI deployment requires models at multiple scales — from frontier research to edge devices — has become an industry standard. The success of Nano, in particular, demonstrated that distillation can transfer a remarkable fraction of large-model capabilities to compact models suitable for on-device deployment.

Competitive Dynamics

Gemini intensified the AI race between Google, OpenAI, Anthropic, and emerging competitors. The paper showed that the gap between Google and OpenAI had effectively closed, forcing all players to accelerate their development timelines. Subsequent releases — Gemini 1.5 with its million-token context window, Gemini 2.0 with agentic capabilities — have maintained this competitive pressure and pushed the frontier even further.

Implications for the Transformer Architecture

Despite the remarkable capabilities achieved, Gemini's architecture remains fundamentally a transformer decoder — the same core design introduced in the 2017 "Attention Is All You Need" paper. This continuity suggests that the transformer architecture still has substantial headroom for improvement through scaling, data curation, and training methodology innovation. The bottleneck isn't architecture; it's compute, data quality, and alignment.

Looking ahead, Gemini's trajectory — from 1.0 to 1.5 to 2.0 and beyond — suggests that Google DeepMind is committed to rapid iteration on the foundation established in this paper. With each release expanding context windows, adding agentic capabilities, and improving reasoning, the Gemini family continues to push the boundaries of what AI systems can achieve.

Frequently Asked Questions

What is Google Gemini AI?

Google Gemini is a family of multimodal AI models developed by Google DeepMind. Released in December 2023, Gemini comes in three sizes — Ultra, Pro, and Nano — and can natively process text, images, audio, video, and code. Gemini Ultra was the first AI model to surpass human-expert performance on the MMLU benchmark.

How does Gemini compare to GPT-4?

Gemini Ultra advances the state of the art in 30 of 32 benchmarks tested. While GPT-4 accepts only text and image inputs, Gemini natively processes audio, video, and code alongside text and images. On the MMLU benchmark, Gemini Ultra scores 90.0% compared to GPT-4's 86.4%, though GPT-4 outperforms on greedy decoding without chain-of-thought prompting.

What are the differences between Gemini Ultra, Pro, and Nano?

Gemini Ultra is the largest and most capable model designed for complex reasoning tasks. Gemini Pro is a mid-size model optimized for efficiency while remaining competitive. Gemini Nano comes in two variants — Nano-1 (1.8B parameters) and Nano-2 (3.25B parameters) — designed for on-device deployment on smartphones and edge hardware.

What multimodal capabilities does Gemini have?

Gemini can natively understand and reason across text, images, audio, video, and code in a single model. Unlike competitors that bolt on vision separately, Gemini was trained from the ground up as a multimodal system. It can process interleaved sequences of different modalities, perform cross-modal reasoning, and generate both text and image outputs.

What hardware was used to train Gemini?

Gemini was trained on Google's TPUv4 and TPUv5e accelerator clusters using JAX and the Pathways system. For the Ultra model, Google used 4×4×4 cubes of TPU processors per pod with hot-swapping capability. Training was distributed across multiple data centers using model parallelism within super-pods and data parallelism between them.