NIST AI Risk Management Framework (AI RMF 1.0): The Complete Guide

Table of Contents

- What Is the NIST AI Risk Management Framework?

- Why AI Risk Management Matters in 2026

- The Seven Characteristics of Trustworthy AI

- GOVERN: Building an AI Risk Culture

- MAP: Contextualizing AI Risks

- MEASURE: Quantifying and Analyzing AI Risks

- MANAGE: Treating and Mitigating AI Risks

- Practical Implementation: Profiles and Use Cases

- NIST AI RMF and Global Regulatory Alignment

- Getting Started: A Step-by-Step Roadmap

📌 Key Takeaways

- Voluntary but essential: The NIST AI RMF provides a structured, non-mandatory approach to AI risk management that is rapidly becoming the industry standard for responsible AI deployment.

- Four core functions: Govern, Map, Measure, and Manage form an iterative cycle — with Govern serving as the cross-cutting foundation that infuses risk culture across all activities.

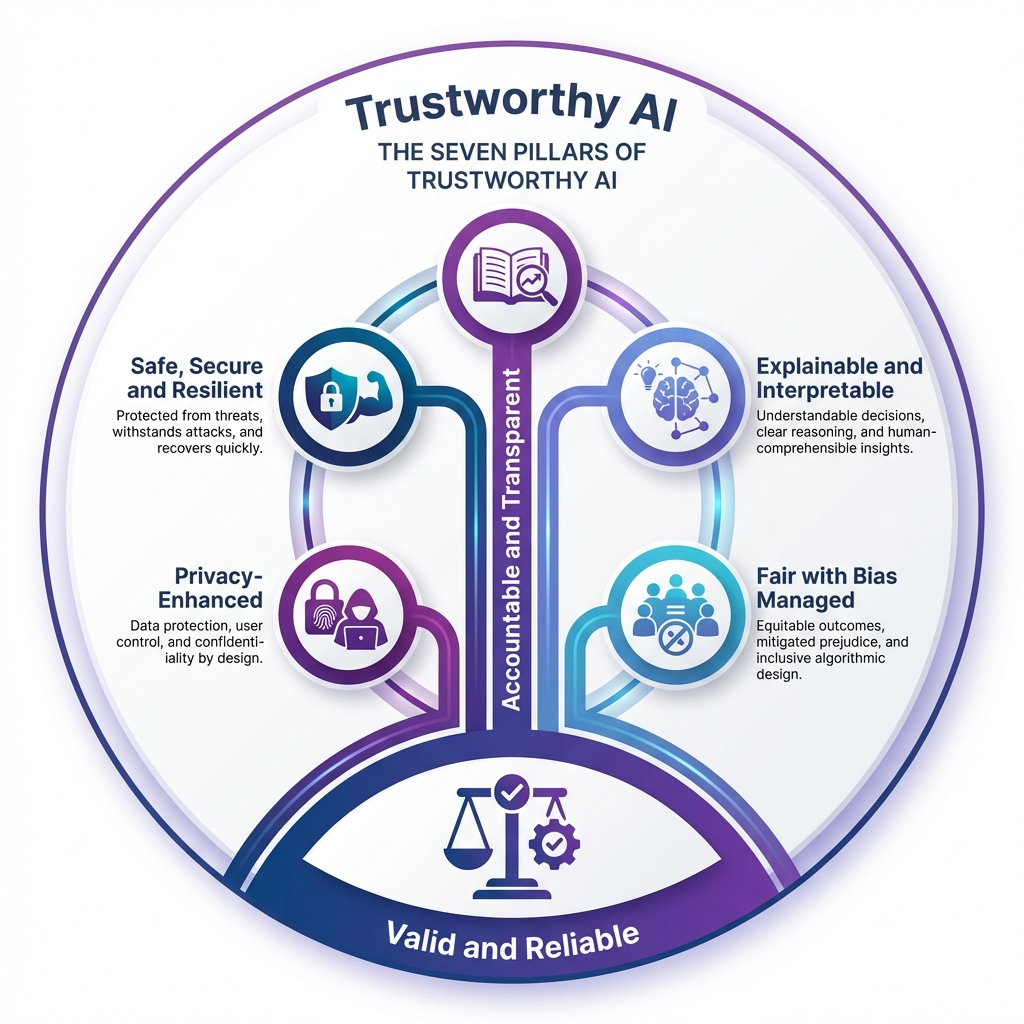

- Seven trustworthiness pillars: Valid & Reliable, Safe, Secure & Resilient, Accountable & Transparent, Explainable & Interpretable, Privacy-Enhanced, and Fair with Harmful Bias Managed.

- Risk-based, not rules-based: Organizations calibrate their approach based on risk tolerance, context, and potential impacts — avoiding one-size-fits-all compliance.

- Global alignment: The framework complements the EU AI Act, ISO/IEC standards, and OECD AI Principles, positioning organizations for cross-border regulatory readiness.

What Is the NIST AI Risk Management Framework?

The NIST AI Risk Management Framework (AI RMF 1.0) is a comprehensive guidance document published by the National Institute of Standards and Technology in January 2023. Mandated by the National Artificial Intelligence Initiative Act of 2020, it provides organizations with a voluntary, flexible, and structured approach to identifying, assessing, and mitigating risks associated with artificial intelligence systems throughout their entire lifecycle.

Unlike prescriptive regulatory mandates, the AI risk management framework is designed to be use-case agnostic and non-sector-specific. Whether you're deploying a medical imaging classifier, a financial fraud detection system, or an autonomous vehicle navigation model, the framework offers a common language and structured process for thinking about AI risks. Its flexibility makes it applicable to organizations of all sizes — from startups building their first ML model to multinational enterprises managing hundreds of AI systems.

The framework is structured in two parts. Part 1 establishes foundational concepts: how AI risks differ from traditional software risks, who the relevant stakeholders (or "AI actors") are, and what characteristics define trustworthy AI. Part 2 introduces the operational core — four interconnected functions (Govern, Map, Measure, Manage) broken into categories and subcategories that organizations can tailor to their specific needs through customizable "profiles."

"AI systems are designed to operate with varying levels of autonomy. AI risk management offers approaches to minimize potential negative impacts of AI systems, such as threats to civil liberties and rights of individuals." — NIST AI RMF 1.0

Why AI Risk Management Matters in 2026

The urgency for structured AI risk management has never been greater. As AI systems become deeply embedded in critical infrastructure — from healthcare diagnostics to financial markets to government services — the consequences of unmanaged risks scale proportionally. The Stanford AI Index Report 2025 documented a 67% increase in reported AI incidents over two years, underscoring that deployment is outpacing governance.

Several factors make the NIST AI RMF particularly relevant today:

- Regulatory convergence: The EU AI Act entered into force in 2024, and organizations need practical frameworks to operationalize compliance. The NIST AI RMF provides exactly that bridge between regulatory requirements and implementation.

- Enterprise adoption acceleration: According to McKinsey, over 72% of organizations now use AI in at least one business function. Yet fewer than 30% have formal AI risk management processes — a gap that exposes them to reputational, legal, and operational risks.

- Model complexity growth: Foundation models and generative AI systems like GPT-4 introduce emergent capabilities and risks that traditional software testing cannot adequately address. The AI RMF's lifecycle approach, which calls for revisiting risk from design through deployment and monitoring, is well suited to these challenges.

- Third-party risk: Most organizations use AI through third-party APIs and pre-trained models, creating supply chain risks that require structured governance — precisely what the GOVERN function addresses.

The framework positions AI risk management not as a compliance burden but as a competitive advantage. Organizations that systematically manage AI risks build greater stakeholder trust, avoid costly failures, and create more resilient AI systems that perform reliably in production environments.

The Seven Characteristics of Trustworthy AI

At the philosophical heart of the NIST AI risk management framework lies a vision of trustworthy AI — systems that stakeholders can rely upon to function as intended while minimizing harms. NIST defines seven interconnected characteristics that, together, form the foundation of AI trustworthiness. Crucially, trustworthiness is "only as strong as its weakest characteristic."

1. Valid and Reliable

Validation confirms that an AI system fulfills the requirements of its intended use. Reliability ensures it performs consistently over time without failure. This characteristic encompasses accuracy (closeness to true values), robustness (maintaining performance under varied conditions), and generalizability (functioning in settings beyond the training distribution). Validity and reliability form the base on which all other characteristics depend.

2. Safe

AI systems should not, under defined conditions, lead to states where human life, health, property, or the environment is endangered. Safety is achieved through responsible design practices, rigorous simulation testing, real-time monitoring, and maintaining the ability to shut down or modify systems when hazards emerge. Safety risks that could cause serious injury or death call for the most urgent prioritization.

3. Secure and Resilient

Security maintains confidentiality, integrity, and availability through protection mechanisms against threats like adversarial examples, data poisoning, and model exfiltration. Resilience extends this to include the ability to withstand unexpected adverse events and degrade gracefully rather than catastrophically. The NIST Cybersecurity Framework (CSF 2.0) provides complementary guidance for this characteristic.

4. Accountable and Transparent

Accountability requires that organizations and individuals take responsibility for AI system outcomes. Transparency provides the information necessary for stakeholders to understand what the system does, how it was developed, and how decisions are made. As NIST emphasizes, accountability presupposes transparency — you cannot hold parties accountable for outcomes they cannot observe or understand.

5. Explainable and Interpretable

Explainability describes how an AI system produces its outputs, while interpretability addresses why those outputs are meaningful in context. Together, they enable operators, overseers, and users to gain deeper insights into functionality and trustworthiness. Explanations should be tailored to the audience — technical teams need different information than end users or regulators.

6. Privacy-Enhanced

Privacy encompasses the norms and practices protecting human autonomy, identity, and dignity. AI systems can create novel privacy risks through inference capabilities — deriving sensitive personal information from seemingly innocuous data. Privacy-enhancing technologies (PETs), data minimization, de-identification, and aggregation techniques all support this characteristic, though organizations must carefully manage tradeoffs between privacy protections and model accuracy.

7. Fair — with Harmful Bias Managed

Fairness addresses equality and equity, focusing on managing harmful biases that could lead to discriminatory outcomes. NIST identifies three categories of AI bias: systemic bias (embedded in societal structures and datasets), computational and statistical bias (stemming from non-representative samples or algorithmic processes), and human-cognitive bias (how humans perceive and act on AI outputs). Each can occur without discriminatory intent but can be amplified by AI's speed and scale.
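As one illustration of how a harmful-bias check can be made concrete, the sketch below computes a demographic parity difference: the gap between the highest and lowest rate of positive predictions across groups. NIST does not prescribe this metric; it is one common fairness indicator among several, and the function names and sample data here are hypothetical.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the share of positive predictions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical binary predictions for two groups, "a" and "b".
preds = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

print(selection_rates(preds, groups))
print(demographic_parity_difference(preds, groups))
```

A gap near zero suggests similar selection rates across groups. What gap is acceptable depends on the use case and the organization's risk tolerance, and a single metric cannot capture systemic or human-cognitive bias.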

GOVERN: Building an AI Risk Culture

The GOVERN function is the cross-cutting foundation of the entire AI risk management framework. While Map, Measure, and Manage address specific system-level activities, Govern establishes the organizational culture, policies, processes, and accountability structures that make effective risk management possible. It is infused throughout all the other functions.

GOVERN encompasses six core categories:

GOVERN 1 — Policies and Procedures: Organizations establish transparent, documented policies for AI risk mapping, measurement, and management. This includes understanding legal and regulatory requirements (GOVERN 1.1), integrating trustworthiness characteristics into organizational practices (GOVERN 1.2), determining appropriate risk management levels based on risk tolerance (GOVERN 1.3), and establishing ongoing monitoring processes with clearly defined roles and review frequencies (GOVERN 1.5).

GOVERN 2 — Accountability Structures: Clear roles, responsibilities, and communication lines are documented (GOVERN 2.1). Personnel receive AI risk management training (GOVERN 2.2), and executive leadership explicitly takes responsibility for AI deployment decisions (GOVERN 2.3). This prevents the diffusion of responsibility that often leads to governance failures.

GOVERN 3 — Diversity, Equity, Inclusion, and Accessibility: Decision-making is informed by demographically and disciplinarily diverse teams (GOVERN 3.1). Policies define and differentiate roles for human-AI configurations and oversight (GOVERN 3.2). Diversity in governance teams directly reduces blind spots in risk identification.

GOVERN 4 — Risk Communication Culture: Organizations foster critical thinking and safety-first mindsets (GOVERN 4.1), document and broadly communicate risks and potential impacts (GOVERN 4.2), and enable testing, incident identification, and information sharing without fear of retaliation (GOVERN 4.3).

GOVERN 5 — Stakeholder Engagement: Policies enable collecting, prioritizing, and integrating external feedback regarding AI impacts (GOVERN 5.1), with mechanisms for regularly incorporating adjudicated feedback into system design (GOVERN 5.2).

GOVERN 6 — Third-Party and Supply Chain Risk: Policies address risks from third-party software, data, and AI components (GOVERN 6.1), including intellectual property considerations — essential as most organizations increasingly rely on pre-trained models and external AI services.

MAP: Contextualizing AI Risks

The MAP function establishes the context for AI risk management by identifying risks, understanding their potential impacts, and categorizing them. Mapping is foundational because effective measurement and management depend on a complete picture of what risks exist and whom they affect.

MAP activities include:

- Intended use and context (MAP 1): Defining the purpose, operational context, and assumptions underlying AI system deployment. This includes identifying potential misuse scenarios and edge cases that designers may not have anticipated.

- Stakeholder identification (MAP 1 and MAP 5): Identifying all parties who may be affected by the AI system, including not just direct users but also individuals and communities who may be impacted without interacting with the system. The NIST AI RMF explicitly distinguishes between "end users" and "affected individuals/communities." (MAP 2 itself covers categorizing the AI system, such as the tasks and methods it implements.)

- Benefit and risk enumeration (MAP 3 and MAP 4): Systematically cataloging both the potential benefits and harms of the AI system, including those arising from third-party software and data. Benefits are considered alongside risks to enable proportionate risk management; some risks may be acceptable given sufficient societal benefit.

- Impact assessment (MAP 5): Evaluating risks across multiple dimensions including individual rights, group equity, societal welfare, environmental impact, and organizational reputation. Impact assessments should be conducted iteratively throughout the AI lifecycle, not just at deployment.

A critical insight from the MAP function is that risks can emerge at any lifecycle stage. An AI system that appears safe during development may exhibit harmful behavior when deployed in contexts that differ from training conditions. Mapping must therefore be an ongoing activity, not a one-time assessment.

MEASURE: Quantifying and Analyzing AI Risks

The MEASURE function uses quantitative and qualitative methods to analyze, assess, benchmark, and monitor AI risks and their impacts. It transforms the risks identified in the MAP phase into actionable data that informs management decisions.

Key measurement activities include:

Metrics and benchmarks: Establishing appropriate metrics for each trustworthiness characteristic — accuracy rates, fairness indicators, robustness scores, privacy leakage measurements, and explainability assessments. NIST acknowledges a significant challenge here: the lack of consensus on robust, universally applicable AI risk metrics. Metrics can be oversimplified, gamed, or lack the nuance needed for meaningful assessment.

Testing, Evaluation, Verification, and Validation (TEVV): The framework emphasizes that TEVV activities should be conducted by parties independent from those who designed or built the AI system. This separation reduces conflicts of interest and provides more objective risk assessments. TEVV spans the full lifecycle — from data quality audits through production monitoring.

Continuous monitoring: AI systems are not static. Data distributions shift, user behaviors evolve, and operational contexts change. The MEASURE function calls for ongoing monitoring against established baselines, with triggers for re-assessment when performance degrades or new risks emerge.
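The idea of monitoring against a baseline with a re-assessment trigger can be sketched in a few lines. This is a minimal illustration rather than anything NIST specifies: the `Baseline` class, the tolerance value, and the accuracy figures are all hypothetical, and a production setup would typically track several metrics and use statistical drift tests.

```python
from dataclasses import dataclass


@dataclass
class Baseline:
    metric: str
    value: float      # value recorded at the last formal assessment
    tolerance: float  # largest acceptable drop before re-assessment is triggered


def needs_reassessment(baseline, recent_values):
    """Return True when the mean of recent observations has dropped
    below the baseline by more than the allowed tolerance."""
    if not recent_values:
        raise ValueError("recent_values must not be empty")
    current = sum(recent_values) / len(recent_values)
    return (baseline.value - current) > baseline.tolerance


# Hypothetical accuracy baseline and two windows of production measurements.
accuracy_baseline = Baseline(metric="accuracy", value=0.92, tolerance=0.03)
stable_window = [0.91, 0.90, 0.92]
degraded_window = [0.88, 0.87, 0.86]

print(needs_reassessment(accuracy_baseline, stable_window))
print(needs_reassessment(accuracy_baseline, degraded_window))
```

The stable window stays within tolerance, while the degraded window exceeds it and would prompt a return to MAP and MEASURE activities for that system.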

NIST identifies several measurement challenges that organizations should anticipate:

| Challenge | Description |
|---|---|
| Third-party alignment | Risk metrics may not align between developing and deploying organizations |
| Emergent risks | New risk categories can appear post-deployment in unexpected ways |
| Lab vs. real-world gap | Laboratory measurements often differ from operational realities |
| Inscrutability | Complex models resist straightforward risk measurement |
| Human baseline comparison | Difficult to systematize comparison of AI vs. human performance |

MANAGE: Treating and Mitigating AI Risks

The MANAGE function allocates risk management resources to mapped and measured risks based on organizational priorities. It encompasses the practical decisions about how to respond to identified risks: accept, mitigate, transfer, or avoid them.

MANAGE activities include:

- Risk response and treatment: Selecting and implementing appropriate risk responses. Where a system presents unacceptable negative risk levels (for example, significant negative impacts are imminent, severe harms are already occurring, or catastrophic risks are present), the framework states that development and deployment should cease in a safe manner until risks can be sufficiently managed. For lower-severity risks, organizations calibrate responses based on risk tolerance.

- Resource allocation: Directing organizational resources — budget, personnel, technology, time — to the highest-priority risks identified through the MEASURE function. This prevents the common pitfall of spreading risk management resources too thin.

- Incident response: Establishing processes for rapid response when AI risks materialize in production. This includes defined escalation paths, communication protocols, and the ability to quickly modify, retrain, or shut down AI systems.

- Documentation and communication: Recording all risk management decisions, rationales, and outcomes. This documentation serves both accountability and organizational learning — enabling teams to improve risk management practices over time.

A key MANAGE principle is proportionality. The framework recognizes that attempting to eliminate all risk is counterproductive. Instead, organizations should focus their most intensive risk management efforts on AI systems that directly affect human safety, rights, or welfare, while applying lighter-touch governance to lower-risk applications.
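Proportionate prioritization can be illustrated with a small risk register. The sketch below assumes a simple likelihood-times-severity score on hypothetical 1-to-5 scales; the framework describes risk as a composite of probability and magnitude of consequences but does not mandate any particular scoring formula, and the example risks are invented.

```python
from dataclasses import dataclass
from enum import Enum


class Response(Enum):
    ACCEPT = "accept"
    MITIGATE = "mitigate"
    TRANSFER = "transfer"
    AVOID = "avoid"


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); hypothetical scale
    severity: int    # 1 (negligible) to 5 (catastrophic); hypothetical scale
    response: Response

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by severity.
        return self.likelihood * self.severity


def prioritize(risks):
    """Order risks so the highest score comes first."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Invented register entries for illustration only.
register = [
    Risk("Chatbot gives outdated policy information", 4, 2, Response.MITIGATE),
    Risk("Triage model misses urgent cases", 2, 5, Response.AVOID),
    Risk("Dashboard forecast slightly off", 3, 1, Response.ACCEPT),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.response.value:<8}  {risk.name}")
```

Sorting by score puts the safety-relevant triage risk at the top, which is where the most intensive management effort would go. The low-scoring dashboard risk is simply accepted.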

Practical Implementation: Profiles and Use Cases

The NIST AI RMF introduces the concept of profiles — customized implementations of the framework tailored to specific organizational contexts, sectors, or use cases. Profiles allow organizations to select which categories and subcategories are most relevant to their situation and prioritize accordingly.

A profile might address:

- Sector-specific risks: Healthcare AI systems face different risks than financial services models. A healthcare profile would emphasize safety, validity, and bias management, while a financial services profile might prioritize security, fairness, and regulatory compliance.

- Risk tier mapping: Organizations can create tiered profiles — rigorous governance for high-risk systems (e.g., autonomous decision-making affecting individuals) and streamlined processes for lower-risk applications (e.g., internal analytics dashboards).

- Maturity levels: Organizations early in their AI governance journey can use profiles to identify priority areas for immediate focus, then progressively expand their risk management practices as capabilities mature.
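A tiered profile can be represented as plain data. The following sketch is purely illustrative: the tier names, the controls in `TierProfile`, the review intervals, and the classification rule are assumptions chosen for the example, not part of the NIST AI RMF.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierProfile:
    review_interval_days: int  # how often the system's risks are re-reviewed
    independent_tevv: bool     # whether testing is done by an independent party
    executive_signoff: bool    # whether leadership must approve deployment


# Hypothetical tiers: rigorous governance for high-risk systems, streamlined for low-risk ones.
TIERS = {
    "high": TierProfile(review_interval_days=90, independent_tevv=True, executive_signoff=True),
    "low": TierProfile(review_interval_days=365, independent_tevv=False, executive_signoff=False),
}


@dataclass
class AISystem:
    name: str
    makes_automated_decisions: bool
    affects_individuals: bool


def tier_for(system):
    """Assumed rule: automated decisions that affect individuals are high risk."""
    if system.makes_automated_decisions and system.affects_individuals:
        return "high"
    return "low"


inventory = [
    AISystem("loan-approval-model", True, True),
    AISystem("internal-analytics-dashboard", False, False),
]

plan = {system.name: TIERS[tier_for(system)] for system in inventory}
for name, profile in plan.items():
    print(name, profile)
```

Keeping tiers as data makes it straightforward to tighten a control or add a new tier as governance practices mature.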

The framework also defines comprehensive AI actor categories — from AI designers and developers to deployment operators, TEVV professionals, procurement teams, and affected communities. Each actor has specific responsibilities within the risk management process, creating a web of accountability that extends beyond the technical team to include leadership, legal, compliance, and external stakeholders.

For practical implementation, NIST provides the AI RMF Playbook — a companion resource with suggested actions, references, and implementation guidance for each subcategory. The Playbook is updated more frequently than the framework itself, incorporating lessons learned and emerging best practices from the AI community.

NIST AI RMF and Global Regulatory Alignment

One of the AI risk management framework's greatest strengths is its alignment with global regulatory and standards initiatives. Organizations operating across jurisdictions can use the NIST AI RMF as a practical foundation that maps to multiple regulatory requirements:

EU AI Act alignment: The EU AI Act's risk-based classification system and requirements for high-risk AI systems closely parallel the NIST AI RMF's approach. Organizations that implement the framework's GOVERN and MAP functions are well-positioned for EU AI Act conformity assessments.

ISO/IEC standards: The NIST AI RMF draws extensively from ISO/IEC standards, including ISO 31000:2018 (risk management), ISO/IEC 22989:2022 (AI concepts), and ISO/IEC TS 5723:2022 (trustworthiness). Organizations pursuing ISO certification will find significant overlap with their AI RMF implementation.

OECD AI Principles: The framework's definition of AI systems is adapted from the OECD Recommendation on AI (2019), and its emphasis on human-centric values echoes the same principles. All OECD member nations have endorsed these principles, making the NIST AI RMF a practical implementation guide for a globally recognized set of AI governance norms.

This cross-framework compatibility means that an investment in NIST AI RMF implementation generates returns across multiple compliance domains — a significant advantage for organizations navigating an increasingly complex global AI regulatory landscape.

Getting Started: A Step-by-Step Roadmap

Implementing the NIST AI risk management framework may seem daunting, but the framework is designed for incremental adoption. Here is a practical roadmap for organizations at any maturity level:

Phase 1: Foundation (Weeks 1-4)

- Establish executive sponsorship and assign an AI risk management lead

- Inventory existing AI systems and classify by risk level

- Review the NIST AI RMF Playbook and identify priority subcategories

- Define organizational risk tolerance for AI systems

Phase 2: Governance (Weeks 4-8)

- Draft AI risk management policies aligned with GOVERN categories

- Establish roles, responsibilities, and accountability structures

- Create or update AI ethics and responsible use guidelines

- Implement training programs for AI practitioners and leadership

Phase 3: Assessment (Weeks 8-16)

- Conduct MAP activities for highest-risk AI systems first

- Establish MEASURE baselines and metrics for each trustworthiness characteristic

- Perform initial risk assessments using the framework's categories

- Document findings and create risk treatment plans

Phase 4: Operationalization (Ongoing)

- Implement MANAGE processes including incident response and escalation

- Establish continuous monitoring and periodic review cycles

- Build feedback loops with stakeholders and affected communities

- Iterate and improve based on lessons learned and framework updates

The NIST AI RMF is a living document, with formal community review planned no later than 2028. Organizations should establish processes not just for current compliance but for adapting to framework evolution — ensuring their AI governance practices remain aligned with the latest standards and best practices.

As AI systems become increasingly central to business operations and societal infrastructure, structured risk management shifts from a nice-to-have to an organizational imperative. The NIST AI Risk Management Framework provides one of the most comprehensive, flexible, and internationally aligned approaches available today. Organizations that adopt it proactively will be better positioned, not just for regulatory compliance but for building AI systems that genuinely earn stakeholder trust.

Frequently Asked Questions

What is the NIST AI Risk Management Framework?

The NIST AI Risk Management Framework (AI RMF 1.0) is a voluntary guidance document published by the National Institute of Standards and Technology to help organizations design, develop, deploy, and use AI systems responsibly. It provides a structured approach through four core functions — Govern, Map, Measure, and Manage — to identify, assess, and mitigate AI-related risks while promoting trustworthy AI.

What are the four core functions of the NIST AI RMF?

The four core functions are: GOVERN (establishing organizational policies and culture for AI risk management), MAP (contextualizing AI risks and identifying potential impacts), MEASURE (analyzing and quantifying identified risks using metrics and benchmarks), and MANAGE (allocating resources to treat, mitigate, or accept risks based on priorities). GOVERN is cross-cutting and applies across all other functions.

Is the NIST AI Risk Management Framework mandatory?

No, the NIST AI RMF is voluntary and not legally mandated. However, it is increasingly referenced in regulatory discussions and industry best practices. Organizations adopt it to demonstrate due diligence in AI governance, align with emerging regulations like the EU AI Act, and build stakeholder trust through structured risk management processes.

What are the seven trustworthiness characteristics in the NIST AI RMF?

The seven characteristics of trustworthy AI defined in the NIST AI RMF are: (1) Valid and Reliable, (2) Safe, (3) Secure and Resilient, (4) Accountable and Transparent, (5) Explainable and Interpretable, (6) Privacy-Enhanced, and (7) Fair with Harmful Bias Managed. These characteristics are interconnected, and trustworthiness is only as strong as the weakest characteristic.

How does the NIST AI RMF relate to the EU AI Act?

While the NIST AI RMF is a voluntary U.S. framework and the EU AI Act is binding legislation, they share complementary goals. Both emphasize risk-based approaches, transparency, accountability, and human oversight. Organizations operating globally can use the NIST AI RMF as a practical implementation guide that aligns with many EU AI Act requirements, particularly for high-risk AI system assessments and governance structures.