Agentic AI: A Comprehensive Survey of Architectures, Applications & Future Directions

Table of Contents

- What Is Agentic AI? Defining the New Paradigm

- The Dual-Paradigm Framework: Symbolic vs. Neural Agentic AI

- Historical Evolution: From Expert Systems to Agentic AI Agents

- Agentic AI Architectures: LangChain, AutoGen, and CrewAI

- Multi-Agent Systems: Orchestration and Collaboration Patterns

- Agentic AI in Healthcare: Safety-Critical Applications

- Agentic AI in Finance: Adaptive Intelligence for Data-Rich Markets

- AI Governance and Ethics: Paradigm-Specific Challenges

- The Neuro-Symbolic Future: Hybrid Agentic AI Systems

- Research Gaps and Critical Directions for Agentic AI

- Implications for Enterprise AI Strategy

🔑 Key Takeaways

- What Is Agentic AI? Defining the New Paradigm — Agentic AI represents a fundamental shift in artificial intelligence—from passive, task-specific tools toward autonomous systems that exhibit genuine agency.

- The Dual-Paradigm Framework: Symbolic vs. Neural Agentic AI — The most significant contribution of this agentic AI survey is its dual-paradigm taxonomy, which categorizes agentic systems into two distinct architectural lineages.

- Historical Evolution: From Expert Systems to Agentic AI Agents — The survey maps agentic AI’s evolution through five distinct but overlapping eras, providing essential context for understanding why the dual-paradigm framework matters.

- Agentic AI Architectures: LangChain, AutoGen, and CrewAI — The architectural landscape of modern agentic AI is dominated by neural-paradigm frameworks that achieve agency through fundamentally different mechanisms than their symbolic predecessors.

- Multi-Agent Systems: Orchestration and Collaboration Patterns — Multi-agent systems (MAS) represent the most sophisticated expression of agentic AI, and the survey identifies several critical orchestration patterns that determine system behavior and effectiveness.

What Is Agentic AI? Defining the New Paradigm

Agentic AI represents a fundamental shift in artificial intelligence—from passive, task-specific tools toward autonomous systems that exhibit genuine agency. Unlike conventional AI models that respond to individual prompts in isolation, agentic AI systems are defined by their capacity for proactive planning, contextual memory, sophisticated tool use, and the ability to adapt behavior based on environmental feedback. These systems operate not as mere solvers but as collaborative partners capable of dynamically perceiving complex environments, reasoning about abstract goals, and orchestrating sequences of actions.

The distinction between a single AI agent and agentic AI as a field is crucial for understanding this survey’s scope. An AI agent is a self-contained autonomous system designed to accomplish a goal independently—for example, a powerful LLM-based agent tasked with writing a complete project proposal would autonomously break down the task, conduct research, draft sections, and format the final document. Agentic AI, by contrast, is the broader architectural approach concerned with creating systems that exhibit agency, frequently through multi-agent systems (MAS) where specialized agents coordinate to solve problems too complex for any single agent.

This survey, a PRISMA-based systematic review of 90 studies published between 2018 and 2025, introduces a dual-paradigm framework that resolves a critical confusion in the field: the tendency to conflate modern neural systems with outdated symbolic models, a practice the authors term “conceptual retrofitting.” The framework gives researchers, practitioners, and organizations a clearer basis for understanding and deploying agentic AI systems.

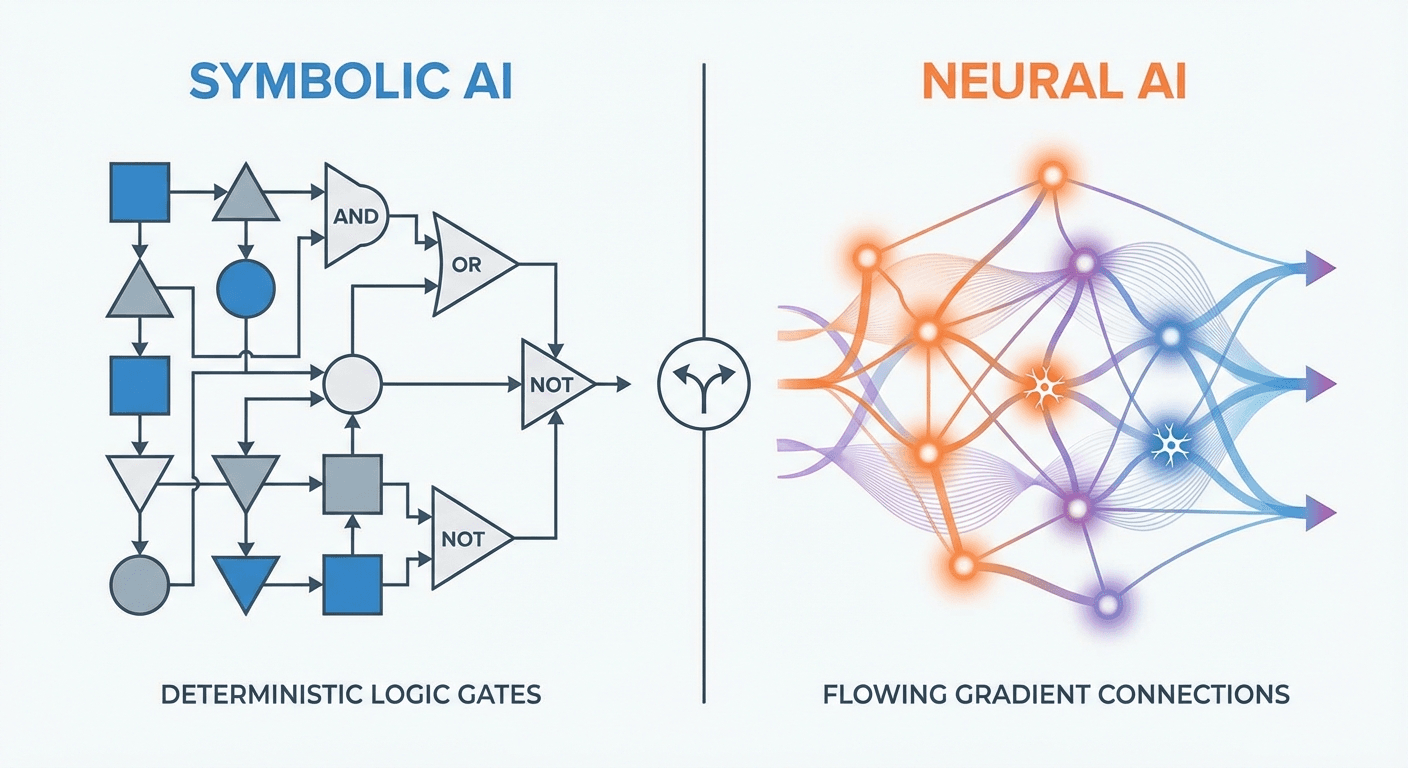

The Dual-Paradigm Framework: Symbolic vs. Neural Agentic AI

The most significant contribution of this agentic AI survey is its dual-paradigm taxonomy, which categorizes agentic systems into two distinct architectural lineages. Understanding these lineages is essential for anyone building, evaluating, or deploying AI agent systems, as the choice between paradigms carries profound implications for reliability, adaptability, and governance.

The Symbolic/Classical paradigm relies on algorithmic planning, explicit logic, and persistent state management. Rooted in the expert systems and rule-based approaches of the 1950s through 1980s, symbolic agentic AI uses frameworks like the Belief-Desire-Intention (BDI) model and perceive-plan-act-reflect (PPAR) loops. These systems excel in environments where deterministic behavior, explainability, and formal verification are paramount. Clinical decision support systems in healthcare, for instance, often leverage symbolic approaches because every reasoning step can be traced and audited.
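
To make the perceive-plan-act-reflect loop concrete, here is a minimal sketch of a symbolic agent on a one-dimensional track. The `GridAgent` class, its rules, and the trace format are illustrative assumptions rather than anything taken from the survey. The point is only that explicit rules plus persistent state give a deterministic, auditable run.

```python
from dataclasses import dataclass, field


@dataclass
class GridAgent:
    """Toy symbolic agent on a 1-D track (all names here are hypothetical)."""
    position: int
    goal: int
    trace: list = field(default_factory=list)  # persistent, auditable state

    def perceive(self) -> int:
        # Belief: signed distance from the current position to the goal.
        return self.goal - self.position

    def plan(self, distance: int) -> str:
        # Explicit rules: the same belief always yields the same intention.
        if distance > 0:
            return "right"
        if distance < 0:
            return "left"
        return "stay"

    def act(self, action: str) -> None:
        self.position += {"right": 1, "left": -1, "stay": 0}[action]

    def reflect(self, distance: int, action: str) -> None:
        # Record what was believed, what was done, and the resulting state.
        self.trace.append((distance, action, self.position))

    def run(self, max_steps: int = 20) -> list:
        for _ in range(max_steps):
            distance = self.perceive()
            action = self.plan(distance)
            self.act(action)
            self.reflect(distance, action)
            if action == "stay":
                break
        return self.trace


agent = GridAgent(position=0, goal=3)
for step in agent.run():
    print(step)  # (believed distance, chosen action, new position)
```

Running the agent twice from the same start state produces the same trace, which is the property that makes this style of system amenable to audit and formal verification.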

The Neural/Generative paradigm leverages stochastic generation and prompt-driven orchestration, built upon the foundation of large language models and transformer architectures. Modern frameworks like LangChain, AutoGen, and CrewAI achieve agency through prompt chaining, conversation orchestration, and dynamic context management—mechanisms fundamentally different from symbolic planning. This paradigm dominates in adaptive, data-rich environments where flexibility and natural language understanding are critical. As our analysis of large language model capabilities and limitations explores, the neural paradigm’s strengths come with distinct trade-offs in reliability and interpretability.
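
Prompt chaining can be sketched without any particular framework. In the example below, `stub_llm` is a hypothetical stand-in for a real model call and `run_chain` is an illustrative helper; neither reflects the API of LangChain, AutoGen, or CrewAI. Each step's output is simply substituted into the next prompt template.

```python
from typing import Callable, List


def stub_llm(prompt: str) -> str:
    # Hypothetical stand-in: a real system would call a language model here.
    return f"<answer to: {prompt}>"


def run_chain(llm: Callable[[str], str], templates: List[str], user_input: str) -> List[str]:
    """Feed each step's output into the next prompt template."""
    outputs = []
    current = user_input
    for template in templates:
        prompt = template.format(input=current)  # context carried forward
        current = llm(prompt)
        outputs.append(current)
    return outputs


steps = ["Summarize: {input}", "List open questions about: {input}"]
for i, out in enumerate(run_chain(stub_llm, steps, "agentic AI survey"), start=1):
    print(i, out)
```

Note that the control flow lives in the ordering of prompts rather than in an explicit planner, which is the contrast with symbolic planning drawn above.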

Historical Evolution: From Expert Systems to Agentic AI Agents

The survey maps agentic AI’s evolution through five distinct but overlapping eras, providing essential context for understanding why the dual-paradigm framework matters. The Symbolic AI Era (1950s–1980s) established AI’s foundational ambition through rule-based and expert systems like MYCIN and DENDRAL. The Machine Learning Era (1980s–2010s) shifted toward data-driven approaches with statistical models. It was followed by the Deep Learning Era (2010s–present), which leveraged neural networks for automatic feature learning.

The Generative AI Era (2014–present) proved pivotal. The introduction of the transformer architecture in 2017 enabled scaling of large language models like GPT and BERT, moving AI beyond perception into generation—producing coherent text, code, and media. This era provided the essential substrate for modern agentic AI: a powerful, general-purpose statistical reasoner capable of understanding and generating natural language at unprecedented quality.

The current Agentic AI Era (2022–present) represents the frontier where generative capabilities are harnessed for autonomous action. Systems like AutoGPT demonstrated that LLMs could pursue goals through planning and tool use. Frameworks like CrewAI and AutoGen enabled multi-agent collaboration with specialized roles. Critically, this era’s neural paradigm is not a linear descendant of symbolic AI—it is built upon a completely different architectural foundation, which is precisely why the dual-paradigm framework is necessary to avoid analytical confusion.

Agentic AI Architectures: LangChain, AutoGen, and CrewAI

The architectural landscape of modern agentic AI is dominated by neural-paradigm frameworks that achieve agency through fundamentally different mechanisms than their symbolic predecessors. Understanding these frameworks is essential for organizations deploying agentic AI systems at scale.

LangChain provides the foundational infrastructure for building LLM-powered agents through prompt chaining and tool integration. It enables developers to compose complex workflows by linking LLM calls with external data sources, APIs, and specialized tools. LangChain’s modular architecture allows agents to maintain conversational context, access vector databases for retrieval-augmented generation (RAG), and execute multi-step reasoning chains. The framework is widely used for single-agent applications where flexibility and extensibility are priorities.

AutoGen, developed by Microsoft Research, introduces a conversation-centric paradigm for multi-agent orchestration. Rather than centralized control, AutoGen enables agents to collaborate through structured conversations, with each agent maintaining its own context and capabilities. This approach mirrors human team collaboration patterns and excels in complex problem-solving scenarios where diverse expertise must be coordinated. The framework’s ability to incorporate human-in-the-loop feedback at any stage makes it particularly valuable for enterprise deployments requiring oversight.

CrewAI takes a role-based approach to multi-agent systems, enabling developers to define agents with specific roles, goals, and backstories. This anthropomorphic design pattern makes it intuitive to architect complex workflows—assigning a “Researcher” agent, a “Writer” agent, and a “Quality Assurance” agent to collaborate on knowledge-intensive tasks. CrewAI’s emphasis on agent autonomy within defined roles strikes a balance between control and flexibility. For a deeper exploration of how these agent architectures connect to broader AI capabilities, see our guide on agent skills for large language models.
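
The role-based pattern can be illustrated in plain Python. This sketch deliberately does not use the CrewAI API: `RoleAgent`, `run_crew`, and the lambda "workers" are hypothetical names standing in for LLM-backed agents. It shows only the idea of passing an artifact through agents with named roles and goals.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class RoleAgent:
    role: str
    goal: str
    work: Callable[[str], str]  # hypothetical stand-in for an LLM-backed step

    def run(self, task: str) -> str:
        return self.work(task)


def run_crew(agents: List[RoleAgent], task: str) -> List[Tuple[str, str]]:
    """Pass the task through each role in order, logging who produced what."""
    log = []
    artifact = task
    for agent in agents:
        artifact = agent.run(artifact)
        log.append((agent.role, artifact))
    return log


crew = [
    RoleAgent("Researcher", "collect facts", lambda t: f"notes on {t}"),
    RoleAgent("Writer", "draft text", lambda t: f"draft from {t}"),
    RoleAgent("Quality Assurance", "check the draft", lambda t: f"approved: {t}"),
]
for role, output in run_crew(crew, "agentic AI"):
    print(f"{role}: {output}")
```

A sequential hand-off is only one possible process; a real deployment might let roles run in parallel or loop back when the quality check fails.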

Multi-Agent Systems: Orchestration and Collaboration Patterns

Multi-agent systems (MAS) represent the most sophisticated expression of agentic AI, and the survey identifies several critical orchestration patterns that determine system behavior and effectiveness. The transition from single-agent to multi-agent architectures introduces coordination challenges that require careful architectural decisions about communication protocols, conflict resolution, and task allocation.

The survey categorizes MAS coordination into three primary patterns. Hierarchical orchestration employs a supervisor agent that delegates tasks to specialized worker agents, maintaining centralized control over workflow execution. This pattern is well-suited for structured business processes where task dependencies are well-defined. Peer-to-peer collaboration enables agents to communicate directly without central coordination, supporting emergent problem-solving in dynamic environments. Hybrid approaches combine elements of both, using hierarchical structure for high-level planning while allowing peer-to-peer interaction for execution details.
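
The difference between hierarchical and peer-to-peer coordination comes down to who decides the next step. The following sketch assumes two toy workers and hypothetical function names (`supervisor`, `peer_to_peer`). In the first pattern a controller holds the plan; in the second each agent names its own successor.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical workers; in a neural-paradigm system each would wrap a model call.
WORKERS: Dict[str, Callable[[str], str]] = {
    "extract": lambda text: text.strip().lower(),
    "count": lambda text: str(len(text.split())),
}


def supervisor(plan: List[str], payload: str) -> str:
    """Hierarchical pattern: one controller decides who runs and in what order."""
    for step in plan:
        payload = WORKERS[step](payload)
    return payload


# Peer agents return (result, name of the next peer or None to stop).
PEERS: Dict[str, Callable[[str], Tuple[str, Optional[str]]]] = {
    "extract": lambda text: (WORKERS["extract"](text), "count"),
    "count": lambda text: (WORKERS["count"](text), None),
}


def peer_to_peer(start: str, payload: str, max_hops: int = 10) -> str:
    """Peer pattern: each agent hands off directly; no central controller."""
    current: Optional[str] = start
    for _ in range(max_hops):
        if current is None:
            break
        payload, current = PEERS[current](payload)
    return payload


text = "  Agents Coordinate Work "
print(supervisor(["extract", "count"], text))
print(peer_to_peer("extract", text))
```

A hybrid design would keep a supervisor-style plan for the high-level stages while letting the agents inside each stage hand off to one another directly. The `max_hops` bound is a simple guard against hand-off cycles, one of the coordination risks that the peer pattern introduces.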

A critical finding of the survey is that multi-agent systems introduce unique challenges around emergent behavior—outcomes that arise from agent interactions but were not explicitly programmed. In neural-paradigm MAS, where each agent’s behavior is stochastically generated, the composition of multiple agents can produce unpredictable and sometimes undesirable emergent behaviors. This challenge represents one of the most active areas of research in agentic AI, with implications for safety, reliability, and trust in deployed systems.

Agentic AI in Healthcare: Safety-Critical Applications

The survey’s domain analysis reveals that healthcare represents a paradigmatic case for understanding how application constraints dictate agentic AI paradigm selection. In medical settings, the symbolic paradigm continues to dominate because of its deterministic behavior, explainability, and auditability—properties essential when decisions affect patient outcomes and are subject to regulatory scrutiny.

Clinical decision support systems powered by symbolic agentic AI can trace every reasoning step from patient data through diagnosis to treatment recommendation, providing clinicians with transparent explanations that support informed decision-making. These systems leverage structured medical ontologies, evidence-based clinical guidelines, and formal logic to ensure that recommendations are both medically sound and legally defensible.
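
The traceability described above can be sketched as a small forward-chaining rule engine that records every rule firing. The rules and fact names below are placeholders invented for illustration and are not clinical guidance; `forward_chain` is a hypothetical helper, not a component of any system named in the survey.

```python
from typing import List, Set, Tuple

Rule = Tuple[str, Set[str], str]  # (rule id, premises, conclusion)


def forward_chain(facts: Set[str], rules: List[Rule]) -> Tuple[Set[str], List[str]]:
    """Apply rules until no new fact is derived; return (facts, audit trail)."""
    known = set(facts)
    trail = []
    changed = True
    while changed:
        changed = False
        for name, premises, conclusion in rules:
            # A rule fires only when all of its premises are already known.
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                trail.append(f"{name}: {sorted(premises)} -> {conclusion}")
                changed = True
    return known, trail


# Placeholder rules for illustration only; NOT clinical guidance.
RULES: List[Rule] = [
    ("R1", {"fever", "cough"}, "suspect_infection"),
    ("R2", {"suspect_infection"}, "order_lab_test"),
]

derived, audit = forward_chain({"fever", "cough"}, RULES)
for line in audit:
    print(line)
```

Each derived conclusion is paired with the rule and premises that produced it, so a reviewer can replay the reasoning step by step.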

However, the survey identifies an emerging trend toward neural integration in healthcare agentic systems. Natural language processing capabilities enabled by LLM-based agents are proving valuable for tasks like medical literature synthesis, patient communication, and unstructured data analysis from clinical notes. The challenge lies in creating hybrid systems that leverage neural capabilities for perception and communication while maintaining symbolic guarantees for clinical reasoning—a frontier that demands the neuro-symbolic approaches discussed in the survey’s research roadmap.

Agentic AI in Finance: Adaptive Intelligence for Data-Rich Markets

Financial services present a contrasting paradigm preference to healthcare. The survey demonstrates that neural agentic AI systems prevail in finance due to the domain’s adaptive, data-rich environments where rapid pattern recognition and flexible response to changing conditions are paramount. Algorithmic trading systems, fraud detection networks, and risk assessment platforms all benefit from the neural paradigm’s ability to process unstructured data and identify subtle patterns at scale.

Modern financial agentic AI systems deploy multi-agent architectures where specialized agents monitor different data streams (market data, news feeds, social sentiment, macroeconomic indicators) and collaborate to generate trading signals or risk assessments. The neural paradigm’s tolerance for ambiguity and its ability to synthesize information from diverse sources make it naturally suited to financial markets where certainty is elusive and speed is essential.
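
As a rough illustration of specialized agents feeding a shared decision, the sketch below combines two toy signals with a weighted average. The agent functions, weights, and threshold are all assumptions made for the example; a production system would use far richer models and is not described at this level in the survey.

```python
from statistics import fmean
from typing import Dict, List


def market_agent(prices: List[float]) -> float:
    # Momentum proxy: +1, 0, or -1 from the price change over the window.
    change = prices[-1] - prices[0]
    return float((change > 0) - (change < 0))


def sentiment_agent(scores: List[float]) -> float:
    # Mean of per-document sentiment scores, each assumed to lie in [-1, 1].
    return fmean(scores)


def combine(signals: Dict[str, float], weights: Dict[str, float], threshold: float = 0.25) -> str:
    """Weighted average of agent signals mapped to a discrete recommendation."""
    total = sum(weights[name] for name in signals)
    score = sum(signals[name] * weights[name] for name in signals) / total
    if score > threshold:
        return "buy"
    if score < -threshold:
        return "sell"
    return "hold"


signals = {
    "market": market_agent([100.0, 101.0, 103.0]),
    "sentiment": sentiment_agent([0.2, -0.1, 0.5]),
}
print(combine(signals, {"market": 0.5, "sentiment": 0.5}))
```

The aggregation step here is deterministic; in a neural-paradigm system the individual agents would typically be stochastic, which is where the accountability questions discussed in this section arise.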

Yet the survey also identifies significant governance challenges in financial agentic AI. The stochastic nature of neural agents means that identical market conditions may produce different trading decisions, creating accountability challenges for regulators. Our analysis of the Financial Services Regulatory Outlook 2026 explores how regulatory frameworks are evolving to address AI-driven decision-making in financial services. The survey argues that financial applications of agentic AI would benefit from neuro-symbolic hybrid approaches that combine neural perception with symbolic guardrails for compliance and risk management.

AI Governance and Ethics: Paradigm-Specific Challenges

One of the survey’s most valuable contributions is its paradigm-aware analysis of governance and ethical challenges. Rather than treating AI governance as a monolithic concern, the authors demonstrate that symbolic and neural agentic AI systems present fundamentally different risk profiles requiring distinct mitigation strategies.

For symbolic agentic systems, governance challenges center on brittleness (the inability to handle situations not anticipated by rule designers) and the difficulty of maintaining rule bases as domain knowledge evolves. However, symbolic systems offer strong auditability and deterministic behavior, making compliance verification straightforward. The survey identifies a significant deficit in governance models designed specifically for symbolic systems, even though their more predictable behavior may mean they need less governance infrastructure than neural systems.

For neural agentic systems, governance challenges are more fundamental. The stochastic nature of LLM-based agents means behavior is inherently non-deterministic—the same input may produce different outputs across runs. This creates profound challenges for accountability, reproducibility, and formal verification. As explored in our AI alignment taxonomy guide, ensuring that neural agents reliably pursue intended goals while avoiding harmful behaviors remains one of AI’s most pressing challenges. The survey identifies prompt injection, hallucination, and emergent misalignment in multi-agent systems as critical risks requiring new governance frameworks.
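
The reproducibility problem can be demonstrated with a toy sampler. The code below is a simplified stand-in for LLM decoding: it draws one action from a softmax over hypothetical scores. `sample_action` and `LOGITS` are illustrative names, and real model behavior depends on many more factors than a temperature and a seed.

```python
import math
import random
from typing import Dict


def sample_action(logits: Dict[str, float], temperature: float, rng: random.Random) -> str:
    """Sample one action from a softmax over logits (toy stand-in for decoding)."""
    if temperature <= 0:
        # Greedy choice: deterministic, always the highest-scoring action.
        return max(logits, key=logits.get)
    scaled = {a: v / temperature for a, v in logits.items()}
    top = max(scaled.values())
    # Subtract the max before exponentiating for numerical stability.
    weights = [math.exp(v - top) for v in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]


LOGITS = {"buy": 1.0, "hold": 0.9, "sell": 0.8}  # hypothetical scores

# Same input, unseeded generator: the outputs vary from call to call.
unseeded = {sample_action(LOGITS, 1.0, random.Random()) for _ in range(200)}
print("distinct outputs for one input:", sorted(unseeded))

# Same input, fixed seed: the sequence of outputs is repeatable.
a, b = random.Random(42), random.Random(42)
print([sample_action(LOGITS, 1.0, a) for _ in range(5)]
      == [sample_action(LOGITS, 1.0, b) for _ in range(5)])
```

Fixing the seed or using greedy selection restores repeatability in this toy, but at the cost of the variability that makes sampling useful in the first place. That trade-off is one reason governance for neural agents cannot simply reuse symbolic-style verification.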

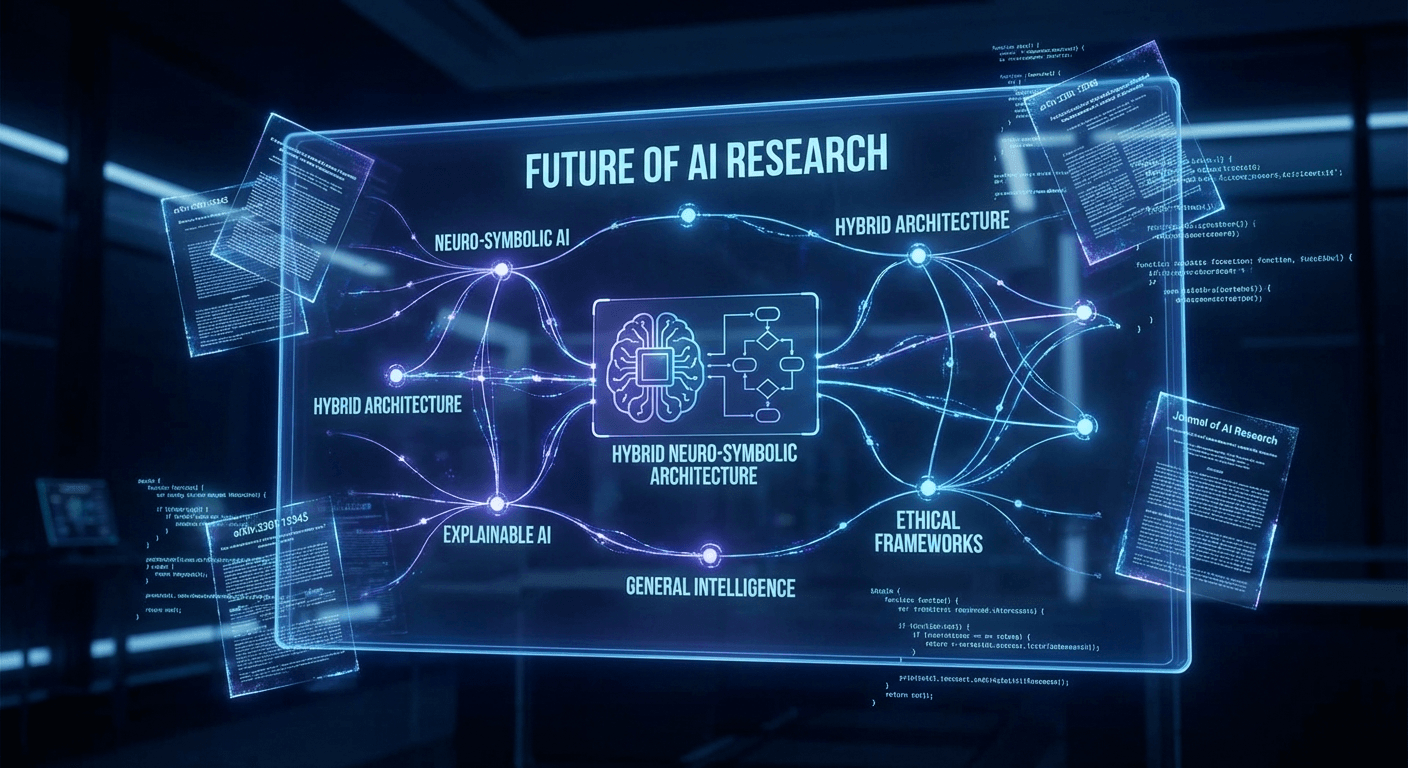

The Neuro-Symbolic Future: Hybrid Agentic AI Systems

The survey’s most forward-looking contribution is its strategic roadmap arguing that the future of agentic AI lies not in the dominance of either paradigm, but in their intentional integration. Hybrid neuro-symbolic architectures promise systems that are both adaptable (leveraging neural flexibility) and reliable (maintaining symbolic guarantees)—a combination essential for deploying agentic AI in the real world at scale.

Proposed hybrid architectures include neural perception with symbolic reasoning, where LLM-based agents handle natural language understanding and unstructured data processing while symbolic systems manage logical inference and constraint satisfaction. Another approach is symbolic scaffolding for neural agents, where formal specifications and rules provide guardrails that constrain neural agent behavior within safe bounds while allowing flexibility within those bounds. A third approach involves learned symbolic representations, where neural systems automatically discover and maintain symbolic structures that support transparent reasoning.
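
Of the approaches above, symbolic scaffolding is the simplest to sketch: a stochastic proposer is wrapped in explicit, deterministic checks. In this example `neural_propose` is a hypothetical stand-in for an LLM-based agent, and the rule names and limits are invented for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Proposal:
    action: str
    quantity: int


def neural_propose(rng: random.Random) -> Proposal:
    # Hypothetical stand-in for a stochastic, LLM-based agent.
    return Proposal(rng.choice(["buy", "sell", "hold"]), rng.randint(0, 500))


# Symbolic guardrails: explicit, named, deterministic predicates.
RULES = {
    "known_action": lambda p: p.action in {"buy", "sell", "hold"},
    "max_quantity_100": lambda p: p.quantity <= 100,
}


def guarded_step(rng: random.Random, max_attempts: int = 5) -> Tuple[Proposal, List[tuple]]:
    """Return the first proposal that passes every rule, else a safe default."""
    rejections = []
    for _ in range(max_attempts):
        proposal = neural_propose(rng)
        failed = [name for name, check in RULES.items() if not check(proposal)]
        if not failed:
            return proposal, rejections
        rejections.append((proposal, failed))  # auditable record of each veto
    return Proposal("hold", 0), rejections


accepted, rejected = guarded_step(random.Random(0))
print("accepted:", accepted)
print("rejected attempts:", len(rejected))
```

Whatever the proposer generates, the accepted output always satisfies the rules, and every rejection is logged with the rule that caused it. The flexibility stays inside the bounds; the guarantee comes from the symbolic layer.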

The survey identifies several critical research gaps that must be addressed for this neuro-symbolic vision to be realized. These include the development of standardized benchmarks for evaluating hybrid systems, the creation of governance frameworks that account for both paradigms, and the engineering of interfaces that enable seamless interaction between symbolic and neural components. The efficient LLM architectures survey in our library provides complementary research on the computational foundations that will enable these hybrid systems. For organizations building AI strategy, understanding these paradigm dynamics is essential—as our analysis of McKinsey’s State of AI 2024 confirms, agentic AI adoption is accelerating across industries and the architecture choices made today will determine system capabilities for years to come.

Research Gaps and Critical Directions for Agentic AI

The systematic review identifies several critical research gaps that represent both challenges and opportunities for the agentic AI community. First, there is a significant imbalance in governance research: while neural systems receive extensive attention regarding safety and alignment, symbolic systems lack dedicated governance models despite their continued importance in safety-critical applications.

Second, the survey highlights a benchmarking deficit for multi-agent systems. Current evaluation methodologies are largely designed for single-agent performance, failing to capture the emergent behaviors, coordination efficiency, and collective intelligence that define MAS effectiveness. Standardized benchmarks that measure both individual agent capability and system-level outcomes are urgently needed.

Third, the scalability of agentic systems remains underexplored. While existing frameworks like AutoGen and CrewAI demonstrate multi-agent collaboration with small teams (typically 3-10 agents), the behavior of systems with hundreds or thousands of agents is largely unknown. Questions about communication overhead, coordination degradation, and emergent instability at scale represent critical open problems, as discussed in recent DeepMind research on multi-agent coordination.

Fourth, real-world deployment evidence is sparse. The majority of studies reviewed operate in controlled experimental settings, with limited data on how agentic AI systems perform in production environments with noisy data, adversarial inputs, and long-running operations. Closing this gap between research and deployment is essential for the field’s maturation.

Implications for Enterprise AI Strategy

For organizations evaluating agentic AI for deployment, this survey provides an essential conceptual toolkit. The dual-paradigm framework enables more precise assessment of which architectural approach suits specific use cases—a strategic decision with long-term implications for system reliability, maintainability, and governance compliance.

The survey’s finding that paradigm selection is strategic rather than technical—symbolic systems for safety-critical domains, neural systems for adaptive environments—offers a clear decision framework for enterprise architects. Organizations in regulated industries (healthcare, financial services, legal) should carefully evaluate whether neural agentic systems meet their compliance requirements or whether symbolic or hybrid approaches are necessary. As highlighted in the Accenture Technology Vision 2025, enterprises that make informed architectural decisions about AI deployment will capture disproportionate value.

The survey also underscores the importance of investing in governance infrastructure alongside agentic AI capabilities. As multi-agent systems become more sophisticated, the organizations that have established robust monitoring, auditing, and control mechanisms will be best positioned to scale their agentic AI deployments safely and effectively. The transition from experimental AI projects to production agentic systems requires not just technical capability but organizational readiness—including updated risk frameworks, new operational procedures, and evolved talent models.

Frequently Asked Questions

What is agentic AI and how does it differ from traditional AI?

Agentic AI refers to AI systems that exhibit genuine agency: they are capable of proactive planning, contextual memory, tool use, and adaptive behavior. Unlike traditional AI, which performs narrow, task-specific functions, agentic AI systems can autonomously pursue goals, orchestrate multi-step workflows, and collaborate with other agents to solve complex problems.

What are the two main paradigms of agentic AI architecture?

The two paradigms are the Symbolic/Classical lineage, which relies on algorithmic planning, explicit logic, and persistent state management, and the Neural/Generative lineage, which leverages stochastic generation, prompt-driven orchestration, and large language models (LLMs). Modern agentic AI primarily operates within the neural paradigm.

What are the key frameworks for building agentic AI systems?

Key frameworks include LangChain for prompt chaining and tool integration, AutoGen for multi-agent conversation orchestration, and CrewAI for role-based agent collaboration. These neural-paradigm frameworks achieve agency through prompt engineering, dynamic context management, and API orchestration rather than traditional symbolic planning.

How is agentic AI applied in healthcare and finance?

In healthcare, symbolic agentic systems dominate due to safety-critical requirements, handling clinical decision support and treatment planning with deterministic reliability. In finance, neural agentic systems prevail in adaptive, data-rich environments for algorithmic trading, risk assessment, and fraud detection where rapid pattern recognition is essential.

What is neuro-symbolic AI and why is it the future of agentic systems?

Neuro-symbolic AI combines the strengths of both paradigms: the reliability and explainability of symbolic systems with the adaptability and learning capabilities of neural systems. The survey argues that this hybrid approach is essential for creating agentic AI that is both adaptable and trustworthy, particularly in safety-critical domains.