DeepSeek-R1 Reinforcement Learning: How Pure RL Is Revolutionizing AI Reasoning

By Libertify Research

·

March 9, 2026

·

12 min read

Table of Contents

- The Reasoning Revolution in AI

- What Is DeepSeek-R1? Architecture and Design

- DeepSeek-R1 Reinforcement Learning Breakthrough: How R1-Zero Learns to Reason

- The “Aha Moment” — When AI Learns to Think

- DeepSeek-R1’s Four-Stage Training Pipeline

- Benchmark Results: DeepSeek-R1 vs OpenAI o1

- DeepSeek-R1 Distillation: Making Small Reasoning Models Powerful

- What DeepSeek-R1 Reinforcement Learning Means for AI’s Future

📌 Key Takeaways

- Pure RL reasoning: DeepSeek-R1 proves that large language models can develop complex reasoning purely through reinforcement learning — without human-annotated demonstrations.

- Matches OpenAI o1 at 95% less cost: DeepSeek-R1 achieves comparable performance to OpenAI o1-1217 on math, coding, and science benchmarks while costing just $0.55 per million input tokens.

- Emergent “aha moment”: During training, the model spontaneously develops self-reflection, strategy adaptation, and extended thinking — behaviors that were never explicitly programmed.

- Open source under MIT license: Full model weights, six distilled models (1.5B–70B), and training methodology are freely available, enabling unprecedented access to frontier reasoning capabilities.

- Published in Nature: The research was published in Nature (vol. 645), marking rare recognition for an AI systems paper and validating the scientific rigor of the approach.

1. The Reasoning Revolution in AI

DeepSeek-R1 reinforcement learning represents a paradigm shift in how AI systems learn to reason — not by studying thousands of carefully curated examples, but simply by being rewarded for getting the right answer. This breakthrough, which sounds almost paradoxical, is at the heart of one of the most significant advances in modern AI research.For years, the dominant approach to building smarter language models followed a predictable formula: pretrain on massive text corpora, then fine-tune with human-written demonstrations of “good” reasoning. OpenAI’s o1 model, released in late 2024, demonstrated that chain of thought reasoning could dramatically boost performance on complex math and coding tasks. But the methodology behind o1 remained proprietary — a black box that the broader research community could study only through its outputs.Then came DeepSeek-R1. Developed by DeepSeek-AI, a team of over 200 researchers, this model proved something remarkable: DeepSeek-R1 reinforcement learning can unlock sophisticated reasoning capabilities without any supervised fine-tuning at all. The model learns to think, reflect, and self-correct purely through reward signals — and it does so at a fraction of the cost of its competitors.Published in Nature (vol. 645, pp. 633–638) — a rare distinction for an AI systems paper — DeepSeek-R1 is not just another large language model. It represents a fundamental shift in how we think about training AI to reason. And because it is fully open source under the MIT license, its impact extends far beyond a single lab. As the Stanford AI Index Report 2025 highlights, open-source AI models are rapidly closing the gap with proprietary systems — and DeepSeek-R1 is perhaps the most compelling evidence yet.

2. What Is DeepSeek-R1? Architecture and Design

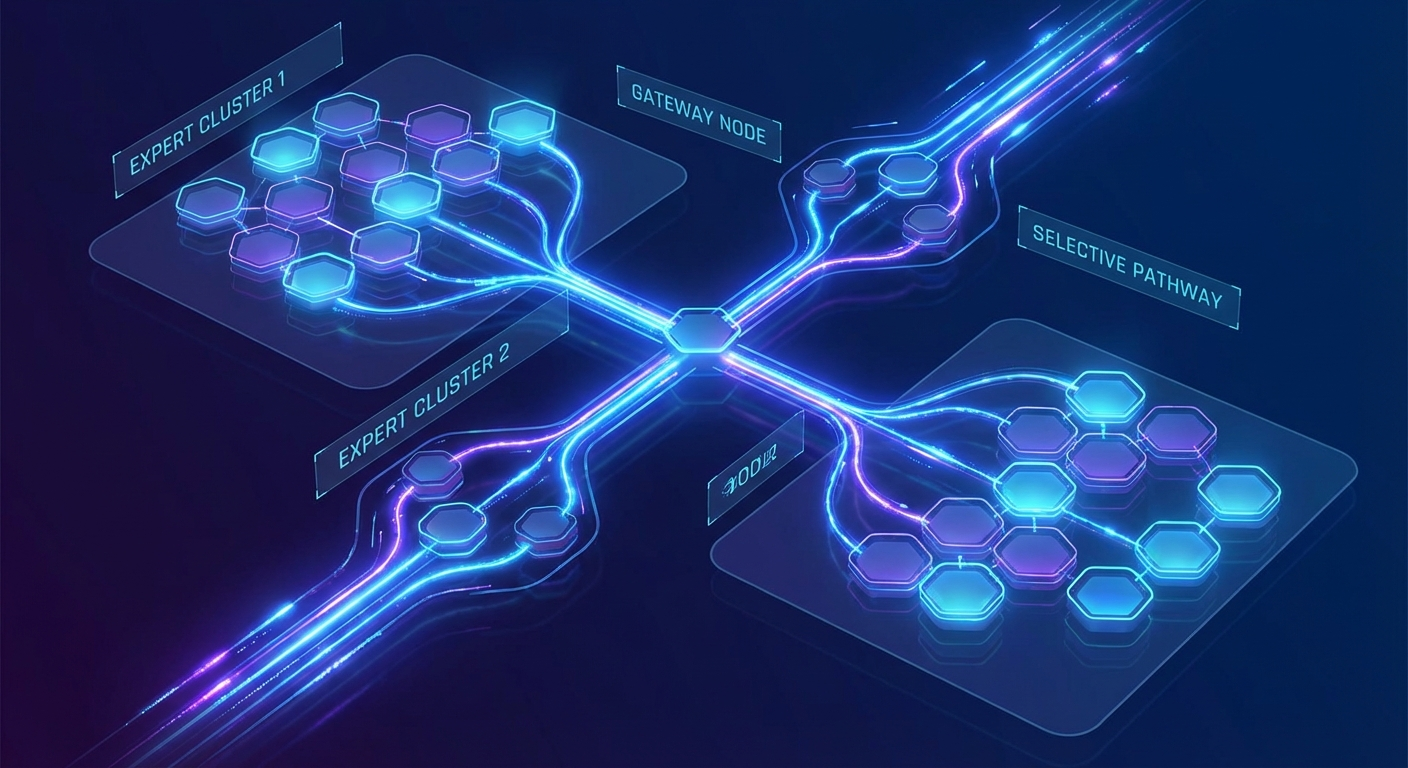

At its foundation, the DeepSeek R1 LLM is built on DeepSeek-V3-Base, a 671-billion-parameter Mixture-of-Experts (MoE) architecture. Unlike dense models where every parameter activates for every token, MoE architectures selectively activate subsets of experts for each input — enabling massive scale without proportionally massive compute costs.The research team introduced not one but two models to validate their thesis:- DeepSeek-R1-Zero: The proof-of-concept. This model was trained with pure reinforcement learning directly on the base model — no supervised fine-tuning, no human reasoning demonstrations. Its purpose was to answer a fundamental question: can RL alone produce genuine reasoning?

- DeepSeek-R1: The production-ready model. Building on insights from R1-Zero, this version incorporates a cold-start phase and a multi-stage training pipeline to optimize readability, accuracy, and alignment while preserving the RL-driven reasoning core.

3. DeepSeek-R1 Reinforcement Learning Breakthrough: How R1-Zero Learns to Reason

The most scientifically significant aspect of this research is what R1-Zero demonstrates about the nature of reasoning in language models. Before this work, the prevailing assumption was clear: to teach a model to reason step-by-step, you need to show it examples of step-by-step reasoning. R1-Zero shattered that assumption.Starting from the raw DeepSeek-V3-Base model — which had been pretrained on text but never fine-tuned for reasoning tasks — the team applied large-scale reinforcement learning with a deliberately simple reward system:- Accuracy rewards: For math problems, deterministic verification (is the final answer correct?). For code, compiler feedback (does it pass the test cases?).

- Format rewards: The model must structure its output using

<think>and</think>tags to separate reasoning from the final answer.

Exploring how AI is transforming industries? Discover Libertify’s interactive research experiences on the latest breakthroughs.Explore Library →

4. The “Aha Moment” — When AI Learns to Think

Perhaps the most captivating finding from the DeepSeek-R1 research is what the team calls the “aha moment” — a phase during RL training when the model spontaneously develops the ability to pause, reevaluate its reasoning, and try a completely different approach when the current one fails.This wasn’t programmed. No one wrote a rule saying “if stuck, backtrack and reconsider.” The behavior emerged from the reward signal alone. During training, the researchers observed R1-Zero’s responses evolve: early in training, the model would commit to a single reasoning chain and follow it to the end, right or wrong. As training progressed, something changed. The model began inserting pauses in its chain of thought — moments where it would write something like “Wait, let me reconsider this step” — before pivoting to an alternative strategy. Three distinct emergent behaviors were documented:

Three distinct emergent behaviors were documented:- Self-reflection: The model revisits and critically evaluates its own prior reasoning steps, identifying logical gaps or errors.

- Dynamic strategy adaptation: When one problem-solving approach fails, the model switches to an entirely different method — for example, moving from algebraic manipulation to geometric reasoning.

- Extended test-time computation: Response length grows naturally during training, from hundreds to thousands of tokens, as the model learns that “thinking longer” on harder problems produces better outcomes.

5. DeepSeek-R1’s Four-Stage Training Pipeline

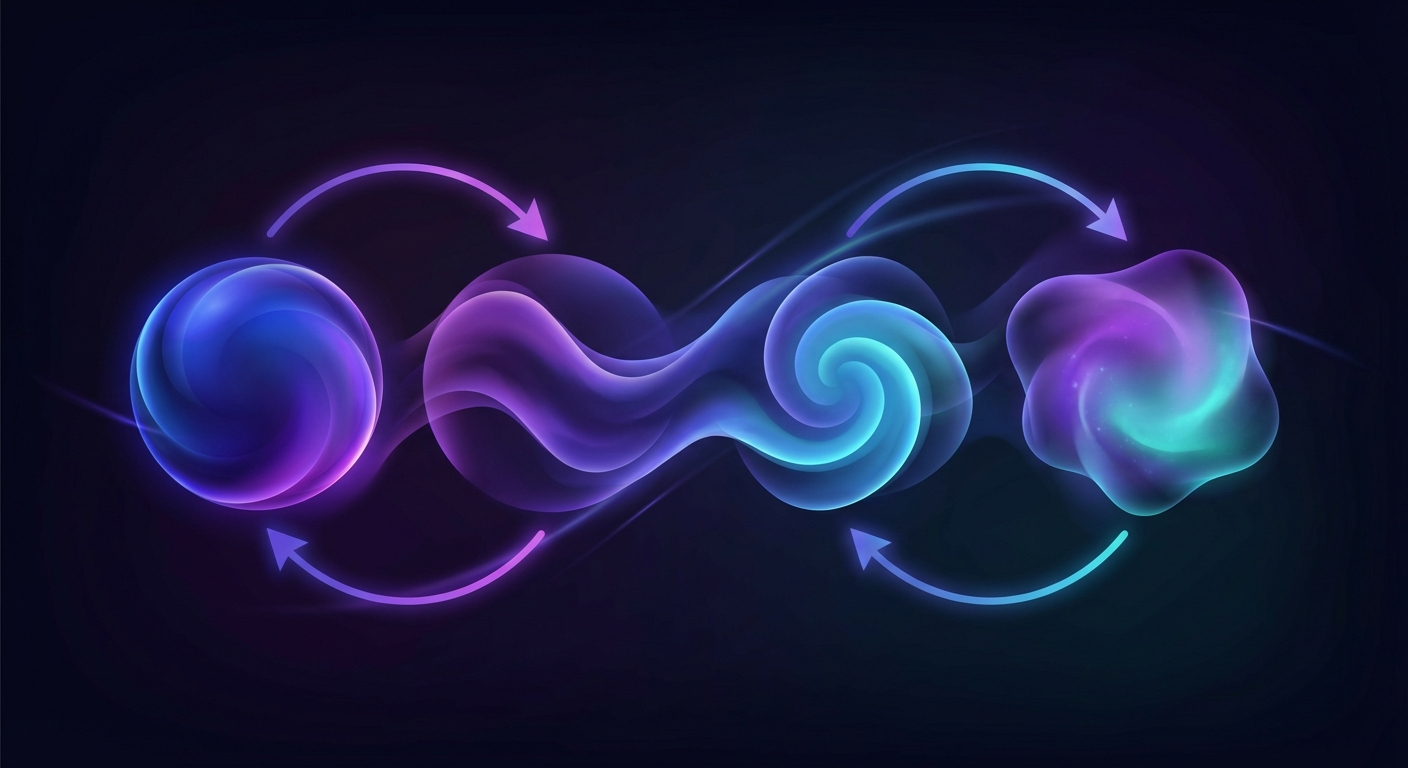

While R1-Zero proved the concept, the production DeepSeek-R1 model required a more structured approach to achieve both high performance and good user experience. This reinforcement learning methodology was refined into a four-stage training pipeline:Stage 1: Cold Start

Thousands of long chain-of-thought examples were used to fine-tune DeepSeek-V3-Base as the initial RL actor. This cold-start data addressed one of R1-Zero’s key weaknesses: while R1-Zero’s reasoning was powerful, its outputs were often poorly formatted, mixed languages mid-response, and were difficult for humans to follow. The cold-start data established baseline readability without compromising the model’s reasoning potential.Stage 2: Reasoning-Oriented RL

The same large-scale reinforcement learning process used for R1-Zero was applied, focused specifically on math, coding, science, and logic tasks. This is where the model develops its core reasoning capabilities through GRPO with rule-based rewards.Stage 3: Rejection Sampling + SFT

Approximately 600,000 reasoning samples were generated through rejection sampling (keeping only high-quality outputs) and combined with roughly 200,000 non-reasoning samples covering writing, question-answering, and role-play. This ~800,000-sample dataset was used for supervised fine-tuning over 2 epochs, broadening the model’s capabilities beyond pure reasoning.Stage 4: RL for All Scenarios

A second RL phase optimized the model for helpfulness and harmlessness across all use cases. This stage used reward models for general tasks and rule-based rewards for reasoning tasks — ensuring the model remains aligned and safe while preserving its reasoning prowess. This pipeline represents a masterclass in practical ML engineering. Each stage addresses a specific weakness while preserving the gains from previous stages. For ML engineers designing their own training pipelines, the original paper on arXiv provides granular implementation details that are rare in proprietary research.

This pipeline represents a masterclass in practical ML engineering. Each stage addresses a specific weakness while preserving the gains from previous stages. For ML engineers designing their own training pipelines, the original paper on arXiv provides granular implementation details that are rare in proprietary research.Want to understand the full landscape of AI innovation in 2025–2026? Explore our interactive analysis of the Stanford AI Index.Read the Analysis →

6. Benchmark Results: DeepSeek-R1 vs OpenAI o1

Numbers matter. The results speak for themselves. Here’s how DeepSeek R1 LLM stacks up against OpenAI o1-1217 across the most demanding AI benchmarks:| Benchmark | DeepSeek-R1 | OpenAI o1-1217 | Winner |

|---|---|---|---|

| AIME 2024 (Pass@1) | 79.8% | 79.2% | R1 |

| MATH-500 | 97.3% | 96.4% | R1 |

| Codeforces (Elo) | 2,029 (>96.3%) | 96.6th percentile | R1 |

| MMLU | 90.8% | 91.8% | o1 |

| GPQA Diamond | 71.5% | — | — |

| AlpacaEval 2.0 | 87.6% win rate | — | — |

| SWE-Bench Verified | Topped o1 | — | R1 |

| Model | Input (per M tokens) | Output (per M tokens) |

|---|---|---|

| OpenAI o1 | $15.00 | $60.00 |

| DeepSeek-R1 API | $0.55 | $2.19 |

| Savings | 96.3% | 96.4% |

7. DeepSeek-R1 Distillation: Making Small Reasoning Models Powerful

Not every use case can accommodate a 671B-parameter model. Recognizing this, DeepSeek-AI released six distilled dense models ranging from 1.5B to 70B parameters, based on the Qwen2.5 and Llama3 model families:| Distilled Model | AIME 2024 | MATH-500 |

|---|---|---|

| R1-Distill-Qwen-7B | 55.5% | — |

| R1-Distill-Qwen-32B | 72.6% | 94.3% |

| R1-Distill-Llama-70B | 86.7% | 94.5% |

See how Libertify transforms complex AI research into interactive experiences your team will actually engage with.Start Free →

8. What DeepSeek-R1 Reinforcement Learning Means for AI’s Future

The significance of DeepSeek-R1 extends well beyond its benchmark scores. It crystallizes several tectonic shifts in the AI landscape that practitioners, leaders, and investors need to internalize.The Open-Source vs. Closed-Source Reckoning

When an open-source model matches a leading proprietary system at 95% lower cost, the competitive dynamics of the AI industry shift fundamentally. Organizations that built their AI strategies around exclusive access to frontier capabilities must now reckon with a world where those capabilities are freely available. This doesn’t eliminate the value of proprietary models — but it compresses the window during which proprietary advantage translates to market dominance.RL as the New Training Paradigm

DeepSeek-R1 reinforcement learning validates a hypothesis that many researchers held but few had proven at scale: that the right reward signal, applied at sufficient scale, can produce capabilities previously thought to require explicit instruction. This has profound implications for the next generation of AI systems. If reasoning can emerge from RL, what other cognitive capabilities might follow? Planning? Creativity? Scientific discovery?The Transparency Premium

DeepSeek-AI’s willingness to publish what didn’t work — Process Reward Models, Monte Carlo Tree Search, neural reward models at scale — is scientifically invaluable. In a field where negative results rarely see the light of day, this transparency accelerates the entire community. It’s a model (no pun intended) that other labs would do well to emulate.Practical Takeaways

For AI practitioners: GRPO is a proven, cost-effective RL algorithm for developing AI reasoning capabilities. Rule-based rewards beat neural reward models at scale. Start with distilled models for prototyping — the 14B and 32B variants offer excellent performance-to-cost ratios.For tech leaders: Reassess your model vendor strategy. The 95% cost differential is not a rounding error — it’s a strategic advantage. Evaluate self-hosting distilled models for latency-sensitive or high-volume reasoning workloads.For investors: The moat around proprietary reasoning models just got thinner. Look for companies building differentiated applications on top of open reasoning models rather than competing on model capability alone. DeepSeek’s publication in Nature signals that the scientific legitimacy of open-source frontier research is established and growing.The story of DeepSeek-R1 is ultimately about a simple, powerful idea: give a model the right incentive, and it will learn to think. No demonstrations required. No hand-holding. Just reward and scale. In that simplicity lies a revolution — one that is open source, peer-reviewed, and available to everyone.Frequently Asked Questions

What is DeepSeek-R1 and how does it differ from OpenAI o1?

DeepSeek-R1 is an open-source large language model developed by DeepSeek-AI that achieves reasoning performance comparable to OpenAI o1-1217 on math, coding, and science benchmarks. The key difference is that DeepSeek-R1 is released under the MIT license with full model weights available, and its API costs 95% less than OpenAI o1 — $0.55 per million input tokens versus $15 for o1.How does reinforcement learning improve AI reasoning in DeepSeek-R1?

DeepSeek-R1 uses Group Relative Policy Optimization (GRPO) to train the model with rule-based rewards for accuracy and formatting. Instead of requiring human-annotated reasoning demonstrations, the model learns to reason purely through RL incentives — developing chain of thought reasoning, self-reflection, and dynamic strategy adaptation as emergent behaviors during training.Is DeepSeek-R1 open source and how much does it cost?

Yes, DeepSeek-R1 is fully open source under the MIT license. Model weights, distilled models, and training methodology are publicly available on GitHub and Hugging Face. The API is priced at $0.55 per million input tokens and $2.19 per million output tokens — representing a 95–96% cost reduction compared to OpenAI o1.What is the “aha moment” in DeepSeek-R1 training?

The “aha moment” refers to a phase during reinforcement learning training where DeepSeek-R1-Zero spontaneously learns to pause its reasoning, reevaluate previous steps, and try alternative approaches — without being explicitly programmed to do so. This emergent self-reflection behavior demonstrates that complex reasoning patterns can arise purely from RL reward signals.Can DeepSeek-R1’s reasoning capabilities be used in smaller models?

Yes. DeepSeek-AI released six distilled models ranging from 1.5B to 70B parameters based on Qwen2.5 and Llama3 architectures. Notably, the distilled 7B model surpasses the 32B QwQ-Preview on AIME 2024, proving that distillation from a large reasoning model is more effective than applying RL directly to smaller models.What algorithm does DeepSeek-R1 use for reinforcement learning?

DeepSeek-R1 uses Group Relative Policy Optimization (GRPO), a novel RL algorithm that eliminates the need for a separate critic model. GRPO estimates baselines from group scores across multiple sampled outputs, reducing training compute and memory requirements while enabling effective reinforcement learning at the 671-billion-parameter scale.Related Articles

Your documents deserve to be read.

PDFs get ignored. Presentations get skipped. Reports gather dust.

Libertify transforms them into interactive experiences people actually engage with.

No credit card required · 30-second setup