Everything About Transformers: The AI Architecture That Changed the World

📌 Key Takeaways

- Self-attention is revolutionary — every token can directly attend to every other token, capturing long-range dependencies that RNNs struggle with

- Parallel processing enables transformers to train much faster than sequential models like LSTMs

- Every major LLM from GPT to Claude to Gemini is transformer-based — understanding transformers means understanding modern AI

- Scaling laws show performance improves predictably with more data, compute, and parameters

- Quadratic complexity with sequence length remains the key limitation driving current research

- Multi-head attention allows the model to focus on different types of relationships simultaneously

What Are Transformers?

If you’ve used ChatGPT, Claude, Gemini, or any modern AI tool, you’ve interacted with a transformer. The transformer architecture is the backbone of nearly every breakthrough in artificial intelligence since 2017 — from language models that write code to systems that predict protein structures.

But what exactly is a transformer? At its core, it’s a neural network architecture designed to process sequences of data (text, images, audio, DNA) by learning which parts of the input are most relevant to each other. Unlike earlier recurrent approaches that processed a sequence one step at a time, transformers process all positions of the input in parallel, and that single change unlocked capabilities few anticipated.

The original paper, “Attention Is All You Need” by Vaswani et al. (2017), came from researchers at Google Brain, Google Research, and the University of Toronto. It was written to improve machine translation. Instead, it triggered an AI revolution.

The Attention Mechanism: Why It Changed Everything

The key insight behind transformers is the self-attention mechanism. Here’s the intuition:

When you read the sentence “The cat sat on the mat because it was tired”, you instantly know that “it” refers to “the cat.” Your brain doesn’t process words left-to-right and hope the connection survives — it directly links related words regardless of their position.

Self-attention does exactly this, but mathematically. For every token in a sequence, it computes:

- Query (Q) — “What am I looking for?”

- Key (K) — “What do I contain?”

- Value (V) — “What information do I provide?”

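The attention score between any two tokens is the dot product of one token’s query with the other token’s key, divided by the square root of the key dimension. A softmax over each token’s scores turns them into weights that sum to 1, and the token’s output is the weighted average of the value vectors. A high weight means “these tokens are strongly related”; the model learns these relationships during training.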
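As a rough illustration of the computation described above, usually written as softmax(QKᵀ / √d_k) V, here is a minimal pure-Python sketch. The function names and the toy 2-dimensional vectors are made up for this example; real models use learned projections and batched tensor libraries.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over small lists of vectors.

    Returns (outputs, weights), with one row per query.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Dot product of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)  # non-negative, sums to 1 across keys
        # The output is the weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy input: 3 tokens with hypothetical 2-dimensional Q, K, V vectors.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
outputs, weights = attention(Q, K, V)
```

Each row of `weights` shows how strongly one token attends to every token in the sequence, including itself.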

“The transformer’s ability to attend to any position in the input sequence in a single step — rather than requiring information to propagate through many sequential steps — is its fundamental advantage.” — Ashish Vaswani, Lead Author


Architecture Deep Dive

The full transformer architecture has two main components:

The Encoder

Processes the input sequence and produces a rich representation of each token in context. Used in models like BERT. Each encoder layer applies:

- Multi-head self-attention — each token attends to all other input tokens

- Feed-forward network — processes each token independently through two linear layers with a ReLU activation

- Layer normalization + residual connections — stabilize training and allow gradient flow
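The feed-forward and normalization steps in the list above can be sketched for a single token as follows. This assumes the post-norm ordering of the original Transformer, LayerNorm(x + FFN(x)), and omits layer norm's learned gain and bias. The weights are hand-picked toy values, not trained parameters.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize one vector to zero mean and (roughly) unit variance."""
    mean = sum(x) / len(x)
    var = sum((xi - mean) ** 2 for xi in x) / len(x)
    return [(xi - mean) / math.sqrt(var + eps) for xi in x]

def linear(x, W, b):
    """Each row of W holds the weights for one output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

def feed_forward(x, W1, b1, W2, b2):
    """Two linear layers with a ReLU in between, applied to one token."""
    hidden = [max(0.0, h) for h in linear(x, W1, b1)]  # ReLU
    return linear(hidden, W2, b2)

def ffn_sublayer(x, W1, b1, W2, b2):
    """Residual connection followed by layer norm (post-norm ordering)."""
    y = feed_forward(x, W1, b1, W2, b2)
    return layer_norm([xi + yi for xi, yi in zip(x, y)])

# Toy sizes: model dimension 2, hidden dimension 4.
W1 = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
b1 = [0.0, 0.0, 0.0, 0.0]
W2 = [[0.5, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.5]]
b2 = [0.0, 0.0]
token = [1.0, -2.0]
ffn_out = feed_forward(token, W1, b1, W2, b2)
normed = ffn_sublayer(token, W1, b1, W2, b2)
```

The residual addition keeps the original token vector in the signal path, which is what lets gradients flow through deep stacks of layers.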

The Decoder

Generates output tokens one at a time, attending to previously generated tokens and, in encoder-decoder models, to the encoder output. GPT-style models use a decoder-only variant of this design. Each decoder layer applies:

- Masked self-attention — each token can only attend to previous tokens (prevents “looking ahead”)

- Cross-attention — attends to the encoder output (in encoder-decoder models)

- Feed-forward network — same as encoder
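Masked self-attention from the list above can be sketched as a small change to the softmax step: scores for future positions are set to negative infinity so they receive zero weight. The function name and the all-zero toy scores are assumptions for illustration.

```python
import math

def causal_attention_weights(scores):
    """Apply a causal mask to an n x n score matrix, then softmax each row.

    scores[i][j] is the raw score of query position i against key position j.
    """
    n = len(scores)
    weights = []
    for i in range(n):
        # Positions j > i are in the "future" and are masked out with -inf,
        # which exp() maps to exactly 0.
        masked = [scores[i][j] if j <= i else float("-inf") for j in range(n)]
        m = max(masked)  # finite, because position i itself is never masked
        exps = [math.exp(s - m) for s in masked]
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

# With equal raw scores, each token spreads its attention evenly
# over itself and the tokens before it.
mask_demo = causal_attention_weights([[0.0] * 3 for _ in range(3)])
```

The first token can only attend to itself, the second to the first two positions, and so on, which is what prevents “looking ahead” during generation.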

Modern LLMs like GPT-4, Claude, and Llama use decoder-only architectures — they dropped the encoder entirely and rely solely on masked self-attention plus massive scale.

Multi-Head Attention

Rather than computing attention once, transformers use multi-head attention — running several attention mechanisms in parallel. Each “head” can learn to focus on different types of relationships:

- Head 1 might learn syntactic relationships (subject-verb agreement)

- Head 2 might learn semantic relationships (synonym patterns)

- Head 3 might learn positional patterns (nearby words)

- Head 4 might learn long-range dependencies (pronoun resolution)

This multi-perspective approach is why transformers are so expressive — they don’t just model one type of relationship, they model many simultaneously.
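One way to picture the mechanics is to split each token vector into equal chunks, run attention separately on each chunk, and concatenate the results. The sketch below does only that. It leaves out the learned per-head projections and the final output projection that real implementations apply, and all names and numbers are illustrative.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def single_head(Q, K, V):
    """Scaled dot-product attention for one head; one output per query."""
    d = len(K[0])
    outputs = []
    for q in Q:
        weights = softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d)
                           for k in K])
        outputs.append([sum(w * v[j] for w, v in zip(weights, V))
                        for j in range(len(V[0]))])
    return outputs

def split_heads(X, num_heads):
    """Split each vector into num_heads contiguous chunks of equal size."""
    d_head = len(X[0]) // num_heads
    return [[x[h * d_head:(h + 1) * d_head] for x in X]
            for h in range(num_heads)]

def multi_head_attention(Q, K, V, num_heads):
    """Run attention independently per head, then concatenate per token."""
    heads = [single_head(q, k, v)
             for q, k, v in zip(split_heads(Q, num_heads),
                                split_heads(K, num_heads),
                                split_heads(V, num_heads))]
    return [[val for head in heads for val in head[t]]
            for t in range(len(Q))]

# Toy input: 3 tokens, model dimension 4, split into 2 heads of size 2.
X = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0], [1.0, 1.0, 0.0, 0.0]]
mha_out = multi_head_attention(X, X, X, num_heads=2)
```

Because each head sees a different slice of the representation, each can end up with a different attention pattern over the same tokens.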


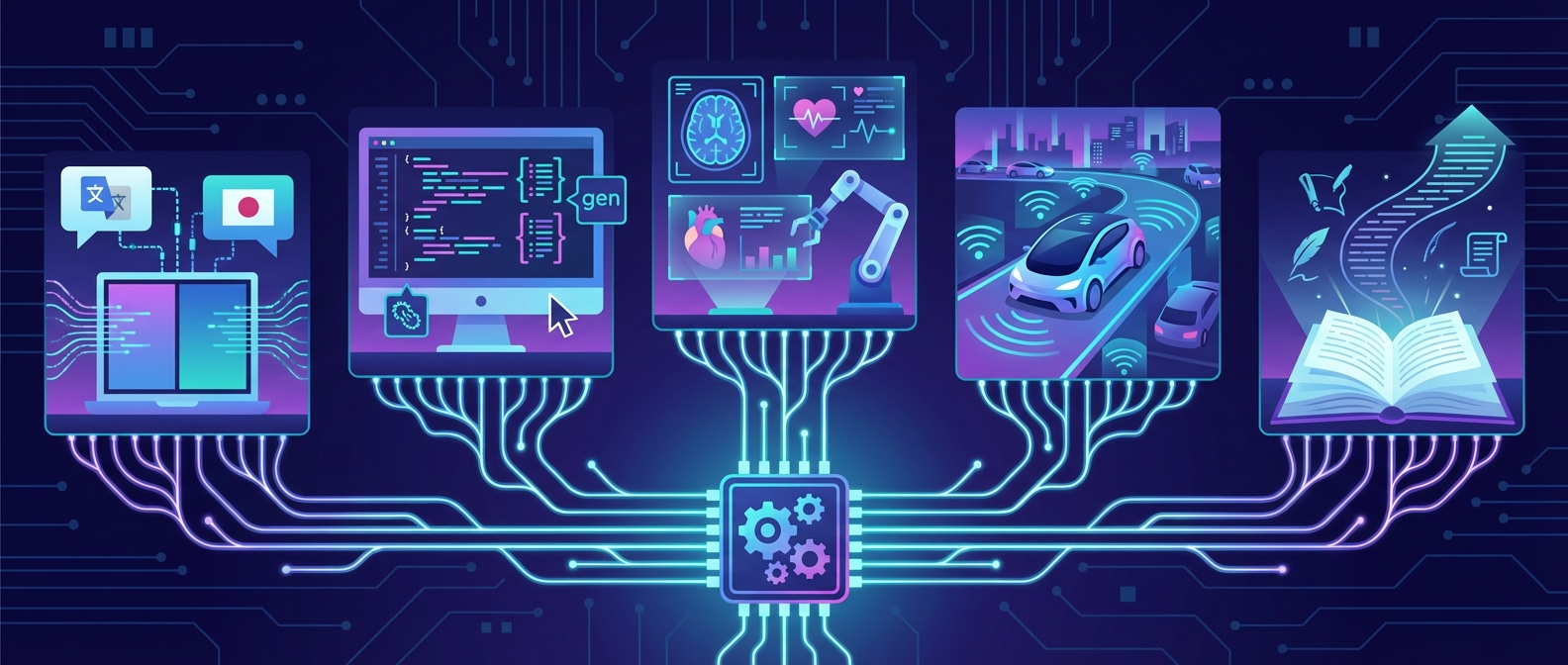

Real-World Applications

Transformers have conquered virtually every domain in AI:

- Natural Language Processing — GPT-4, Claude, Gemini (text generation, reasoning, coding)

- Computer Vision — Vision Transformer (ViT) treats image patches as tokens, matching or exceeding CNNs

- Protein Folding — AlphaFold 2 uses transformers to predict 3D protein structures with atomic accuracy

- Code Generation — Codex, GitHub Copilot, Cursor — all transformer-based

- Speech Recognition — Whisper processes audio spectrograms as sequences

- Robotics — RT-2 from Google DeepMind uses transformers for robot control

- Drug Discovery — Molecular transformers predict drug interactions and properties

- Music Generation — MusicLM, Suno, and Udio generate music from text descriptions

The pattern is clear: any problem that can be framed as sequence processing can benefit from transformers.
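To make “framed as sequence processing” concrete, here is a small sketch of how an image can be cut into patches that are then treated as tokens, as in the Vision Transformer entry above. It uses a grayscale image stored as a list of rows. The function name and the 4×4 toy image are assumptions, and a real model would also apply a learned projection and add position information to each patch.

```python
def patchify(image, patch):
    """Cut a 2D image into non-overlapping patch x patch squares.

    Returns one flattened list of pixel values per patch, in row-major order.
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image size must be a multiple of the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens

# Toy 4x4 image with pixel values 0..15, cut into four 2x2 patches.
toy_image = [[4 * r + c for c in range(4)] for r in range(4)]
patch_tokens = patchify(toy_image, patch=2)
```

After this step the image is just a sequence of vectors, so the same attention layers used for text can be applied to it.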

Transformers vs Previous Architectures

| Property | RNN/LSTM | CNN | Transformer |
|---|---|---|---|
| Processing | Sequential | Local windows | Fully parallel |
| Long-range dependencies | Struggles (vanishing gradient) | Limited by kernel size | Direct (any position) |
| Training speed | Slow (sequential) | Fast (parallel) | Fast (parallel) |
| Scalability | Poor | Good | Excellent |
| Context window | ~100-1000 tokens | Fixed receptive field | 128K-2M+ tokens |


Limitations & Challenges

Transformers aren’t perfect. Their key limitations drive current research:

- Quadratic complexity — Self-attention scales O(n²) with sequence length. A 1M-token sequence has 10¹² query-key pairs, so full attention computes about a trillion scores per head per layer. Proposed mitigations and alternatives include sparse attention, linear attention, and state-space models such as Mamba.

- Massive compute requirements — Training GPT-4 reportedly cost $100M+. This concentrates AI development in a few well-funded labs and raises governance questions that frameworks such as the EU AI Act attempt to address.

- Hallucinations — Transformers can generate confident, plausible-sounding text that’s factually wrong. They model patterns, not truth.

- No built-in reasoning — Despite appearances, transformers perform pattern matching, not logical deduction. Chain-of-thought prompting helps, but fundamental reasoning remains an open problem.

- Energy consumption — Running inference for billions of users requires enormous data centers and energy. Efficiency research (quantization, distillation, pruning) is critical.
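The quadratic-complexity point above is easy to check with a few lines of arithmetic. The sketch below counts pairwise attention scores for one head in one layer and estimates the memory needed if the full score matrix were stored at 2 bytes per score. The 2-byte figure is an assumption, and efficient implementations generally avoid storing the whole matrix at once.

```python
def attention_score_count(n_tokens):
    """Number of query-key pairs for one head in one layer."""
    return n_tokens * n_tokens

def attention_matrix_bytes(n_tokens, bytes_per_score=2):
    """Memory to hold the full score matrix (assumes 2 bytes per score)."""
    return attention_score_count(n_tokens) * bytes_per_score

for n in (1_000, 100_000, 1_000_000):
    scores = attention_score_count(n)
    gigabytes = attention_matrix_bytes(n) / 1e9
    print(f"{n:>9,} tokens -> {scores:.1e} scores, {gigabytes:,.1f} GB if stored")
```

Doubling the sequence length quadruples both numbers, which is why long-context work focuses so heavily on the attention step.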

Future Directions

The transformer architecture is evolving rapidly:

- Mixture of Experts (MoE) — Only activate a subset of parameters per token, enabling models with more total parameters at lower compute per token. Used in Mixtral and reportedly GPT-4.

- State-Space Models — Mamba and similar architectures offer linear scaling with sequence length, potentially replacing transformers for very long contexts.

- Multimodal transformers — GPT-4V and Claude 3 accept text and images, and Gemini additionally handles audio and video, within a single transformer-based model.

- Retrieval-Augmented Generation (RAG) — Combining transformers with external knowledge bases to reduce hallucinations and stay current — a key concern for AI regulation and compliance.

- On-device models — Smaller, efficient transformers running locally on phones and laptops (Phi-3, Gemma, Llama 3).
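The Mixture of Experts idea in the list above can be sketched with top-k routing: a router scores every expert for a token, only the k best-scoring experts run, and their outputs are mixed using softmax weights over the selected scores. This is a simplified sketch under those assumptions, not the routing scheme of any particular model. The experts here are stand-in functions and the router scores are fixed by hand.

```python
import math

def top_k_route(router_logits, k=2):
    """Pick the k experts with the highest logits and softmax over them."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    m = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    out = [0.0] * len(x)
    for index, gate in top_k_route(router_logits, k):
        y = experts[index](x)
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out

# Four hypothetical experts that simply scale the input by 1, 2, 3, and 4.
experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]
router_logits = [0.0, 2.0, 2.0, -1.0]  # hand-picked scores for one token
routes = top_k_route(router_logits, k=2)
moe_out = moe_forward([1.0, 2.0], experts, router_logits, k=2)
```

Only two of the four experts do any work for this token, which is how the approach keeps per-token compute low while the total parameter count grows.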

Whether you’re a researcher, developer, or curious professional, the best way to understand transformers is to interact with them rather than only read about them.

Frequently Asked Questions

What is the Transformer architecture?

The Transformer is a neural network architecture introduced in the 2017 paper “Attention Is All You Need” by Vaswani et al. Unlike previous sequential models (RNNs, LSTMs), Transformers process all input tokens simultaneously using a self-attention mechanism. This allows them to capture long-range dependencies efficiently and train much faster through parallelization. Every major large language model today — including GPT-4, Claude, Gemini, and Llama — is built on the Transformer architecture.

How does the attention mechanism work in Transformers?

The self-attention mechanism computes relationships between all pairs of tokens in a sequence. Each token generates three vectors: a Query (what it’s looking for), a Key (what it contains), and a Value (what information it provides). Attention scores are calculated as the scaled dot product of Query and Key vectors, then passed through a softmax function to create weights. These weights determine how much each token attends to every other token, allowing the model to learn contextual relationships regardless of distance in the sequence.

What is the difference between encoder and decoder in Transformers?

The encoder processes the full input sequence bidirectionally, allowing each token to attend to all other tokens — used in models like BERT for understanding tasks. The decoder generates output tokens auto-regressively (one at a time), using masked self-attention so each token can only attend to previous tokens. Encoder-decoder models (like the original Transformer) use both for tasks like translation. Modern LLMs like GPT-4 and Claude use decoder-only architectures, which have proven remarkably effective for text generation at scale.

Why are Transformers better than RNNs?

Transformers outperform RNNs in three key ways: (1) Parallelization — RNNs process tokens sequentially, while Transformers process all tokens simultaneously, enabling much faster training on modern GPUs. (2) Long-range dependencies — RNNs suffer from vanishing gradients over long sequences, while Transformers can directly attend to any position in a single step. (3) Scalability — Transformers scale efficiently with more data and compute, following predictable scaling laws that have driven the rapid improvement in AI capabilities since 2017.