EU AI Act Compliance Guide: Everything You Need to Know in 2026

The EU AI Act (Regulation EU 2024/1689) is the world’s first comprehensive artificial intelligence regulation. As of 2026, its prohibitions and general-purpose AI rules already apply, and the obligations for high-risk systems take effect in August 2026. This guide breaks down everything you need to understand, from risk classifications to penalties reaching €35 million.

📌 Key Takeaways

- The EU AI Act entered into force on 1 August 2024, with provisions phasing in through 2027 — key obligations for high-risk systems apply from 2 August 2026.

- It uses a risk-based framework with four tiers: unacceptable (banned), high-risk (heavily regulated), limited risk (transparency obligations), and minimal risk (no specific rules).

- Eight AI practices are outright prohibited, including social scoring, subliminal manipulation, and untargeted facial recognition database scraping.

- High-risk AI systems face rigorous requirements: risk management, data governance, technical documentation, human oversight, accuracy, and cybersecurity.

- Six operator categories carry obligations — providers bear the heaviest burden, but deployers, importers, and distributors also have legal duties.

- General-purpose AI (GPAI) models, including large language models, have dedicated rules — with stricter requirements for models posing systemic risk.

- Penalties reach up to €35 million or 7% of worldwide annual turnover for the most serious violations.

- The Act has broad extraterritorial reach — it applies to non-EU companies whose AI systems or outputs are used within the EU.

What Is the EU AI Act?

Published in the Official Journal of the European Union on 12 July 2024, the EU AI Act (formally Regulation (EU) 2024/1689) establishes harmonized rules for the development, placement on the market, and use of artificial intelligence systems across all EU member states.

Unlike sector-specific regulations, the AI Act is technology-neutral and horizontal — it applies across all industries and all types of AI. It aligns its definition of an “AI system” with the OECD definition: a machine-based system that operates with varying autonomy, infers from inputs, and generates outputs (predictions, content, recommendations, or decisions) that influence physical or virtual environments.

A critical distinction: the Act targets systems that infer, meaning they derive from their inputs how to generate outputs, for example through machine learning or logic- and knowledge-based approaches. Software that only executes rules defined solely by humans falls outside its scope. The Commission’s February 2025 guidelines on the AI system definition also indicate that systems relying on long-established methods such as logistic regression (widely used in finance) can fall outside the definition.

Extraterritorial Reach

Like the GDPR before it, the EU AI Act extends far beyond European borders. It applies to:

- Any provider placing an AI system on the EU market or putting it into service in the EU — regardless of where they are established

- Any deployer established or located within the EU

- Non-EU providers and deployers whose AI system outputs are used within the EU

- GPAI model providers placing models on the EU market from anywhere in the world

This means a U.S. company whose AI-generated credit scores are relied upon by a European bank is subject to the Act, even if the AI system itself runs on servers in Virginia.
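The scope rules above boil down to a few yes/no questions. The sketch below encodes a simplified reading of Article 2(1) for providers and deployers only; `Role`, `in_scope`, and the parameter names are hypothetical, and the sketch ignores importers, distributors, and the Article 2 exclusions (such as military use or pure research).

```python
from enum import Enum


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"


def in_scope(
    role: Role,
    located_in_eu: bool,
    placed_on_eu_market: bool,
    output_used_in_eu: bool,
) -> bool:
    """Simplified territorial-scope check for providers and deployers (hypothetical helper)."""
    if output_used_in_eu:
        # Output used in the EU brings the system in scope, including for
        # providers and deployers established outside the EU.
        return True
    if role is Role.PROVIDER:
        # Providers are covered when they place a system on the EU market,
        # wherever they are established.
        return placed_on_eu_market
    # Deployers are covered when they are established or located in the EU.
    return located_in_eu


# The credit-scoring example: a U.S. provider whose scores a European bank relies on.
print(in_scope(Role.PROVIDER, located_in_eu=False,
               placed_on_eu_market=False, output_used_in_eu=True))  # prints True
```

Checking the output-use condition first mirrors the point of the example: where a system runs matters less than where its output is used.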

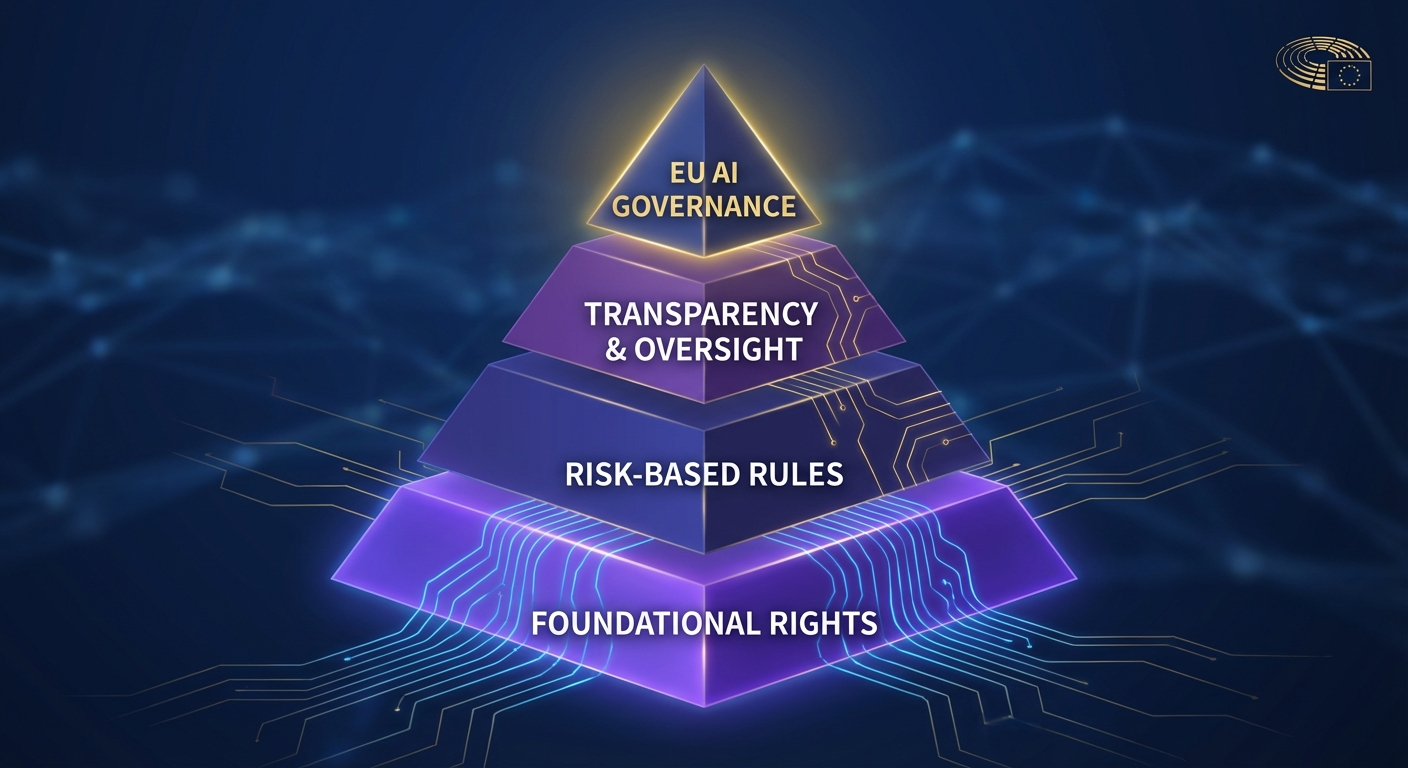

The Risk-Based Classification Framework

The cornerstone of the EU AI Act is its risk-based approach. Rather than regulating all AI equally, it classifies systems into four tiers based on their potential to harm health, safety, and fundamental rights. The higher the risk, the stricter the requirements.

| Risk Level | Regulatory Treatment | Examples |
|---|---|---|
| Unacceptable | Completely prohibited | Social scoring, subliminal manipulation, real-time remote biometric ID in public spaces for law enforcement |
| High Risk | Stringent requirements (risk management, documentation, conformity assessment, human oversight) | AI in hiring, credit scoring, medical devices, critical infrastructure, law enforcement |
| Limited Risk | Transparency obligations | Chatbots, deepfake generators, other systems producing synthetic content |
| Minimal Risk | No specific obligations (voluntary codes of conduct) | AI spam filters, video game NPCs, inventory management |

This framework means the vast majority of AI systems will fall into the minimal- or limited-risk categories and face light or no regulation. The Act’s weight falls squarely on prohibited practices and high-risk systems — which is where compliance efforts must concentrate.

Prohibited AI Practices: What’s Banned Under the EU AI Act

Since 2 February 2025, eight categories of AI practices have been outright banned across the EU. These prohibitions are operator-agnostic: they apply to anyone placing such systems on the market, putting them into service, or using them, regardless of their role in the supply chain.

The Eight Prohibited Practices

- Subliminal, manipulative, or deceptive techniques — AI systems that materially distort behavior by impairing informed decision-making, causing significant harm.

- Exploitation of vulnerabilities — Targeting individuals due to age, disability, or social/economic situation to distort behavior and cause harm.

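- Social scoring — Classifying people based on social behavior or personal characteristics, leading to detrimental treatment that is unjustified, disproportionate, or unrelated to the context in which the data was collected. Lawful evaluation practices carried out for a specific purpose, such as regulated credit scoring, are not caught by the ban (creditworthiness assessment is instead treated as high-risk).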

- Predicting criminality from profiling — Assessing criminal offence likelihood based solely on personality traits or profiling.

- Untargeted facial recognition database scraping — Building or expanding facial recognition databases through mass scraping of internet or CCTV images.

- Emotion inference in workplaces and education — Using biometric-based emotion recognition in employment or educational settings, unless for safety or medical reasons.

- Biometric categorization for sensitive attributes — Using biometric data to infer race, political opinions, religious beliefs, trade union membership, sex life, or sexual orientation.

- Real-time remote biometric identification in public spaces — For law enforcement, except in narrowly defined circumstances: searching for kidnapping/trafficking victims, preventing imminent threats to life, or identifying suspects of serious crimes.

Violations of these prohibitions carry the Act’s highest penalties: up to €35 million or 7% of global annual turnover, whichever is greater.

High-Risk AI Systems: Categories and EU AI Act Requirements

High-risk AI systems are the regulatory core of the EU AI Act. They face the most comprehensive set of obligations, from pre-market conformity assessments to ongoing post-market monitoring. Understanding whether your AI system qualifies — and what that requires — is the central compliance question for most organizations.

Two Classification Pathways

Category A (Annex I): AI systems that are safety components of products, or are themselves products, covered by existing EU harmonization legislation (including machinery, toys, medical devices, lifts, pressure equipment, radio equipment, and vehicles), where that product must undergo a third-party conformity assessment.

Category B (Annex III): AI systems in eight specific high-risk use case areas:

- Biometrics — Remote identification, biometric categorization, emotion recognition

- Critical infrastructure — Safety components in digital infrastructure, transport, utilities

- Education & vocational training — Student selection, evaluation, assessment, proctoring

- Employment & HR — Recruitment screening, performance evaluation, promotion decisions, task allocation

- Essential services — Public assistance eligibility, creditworthiness assessment, risk assessment and pricing in life and health insurance, emergency call evaluation and dispatching, emergency patient triage

- Law enforcement — Risk assessment, criminal profiling, evidence evaluation

- Migration & border control — Detecting individuals, risk assessment, visa/asylum applications

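- Administration of justice & democracy — Assisting judicial authorities in researching and interpreting facts and law; systems intended to influence the outcome of elections or referendums or people’s voting behavior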

Exceptions to High-Risk Classification

Notably, an Annex III system is not automatically high-risk if it performs only:

- A narrow procedural task (e.g., converting unstructured data to structured format)

- An improvement to previously completed human work (e.g., refining document language)

- Pattern detection without replacing human judgment (e.g., flagging grading inconsistencies)

- A preparatory task for a relevant assessment (e.g., translating documents before review)

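However, these exceptions cannot be invoked if the AI system performs profiling of natural persons. A provider that relies on an exception must also document its assessment before placing the system on the market, and must still register the system in the EU database.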
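The exception logic has a simple shape: any one qualifying task is enough to lift an Annex III system out of the high-risk category, but profiling overrides every exception. A minimal sketch under that reading; the class and field names are hypothetical, and in practice each flag stands for a documented legal assessment (including whether the system materially influences decisions), not a checkbox.

```python
from dataclasses import dataclass


@dataclass
class AnnexIIISystem:
    """Hypothetical description of a system used in an Annex III area."""
    name: str
    narrow_procedural_task: bool = False
    improves_completed_human_work: bool = False
    detects_patterns_without_replacing_review: bool = False
    preparatory_task_only: bool = False
    performs_profiling: bool = False


def is_high_risk(system: AnnexIIISystem) -> bool:
    """Simplified reading of the Annex III exceptions and the profiling override."""
    if system.performs_profiling:
        # Profiling of natural persons keeps the system high-risk, exceptions or not.
        return True
    exception_applies = any([
        system.narrow_procedural_task,
        system.improves_completed_human_work,
        system.detects_patterns_without_replacing_review,
        system.preparatory_task_only,
    ])
    return not exception_applies


translator = AnnexIIISystem("pre-review-translator", preparatory_task_only=True)
print(is_high_risk(translator))  # prints False
```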

Section 2 Requirements for High-Risk Systems (Articles 8–15)

Providers of high-risk AI systems must comply with a comprehensive set of technical and organizational requirements:

- Risk management system (Art. 9) — An ongoing, iterative process to identify, analyze, evaluate, and mitigate foreseeable risks throughout the system lifecycle

- Data governance (Art. 10) — Training, validation, and testing datasets must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete, with measures to detect and mitigate possible biases

- Technical documentation (Art. 11) — Comprehensive documentation covering algorithms, data, training methodologies, testing, validation, and risk management measures

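- Record keeping & logging (Art. 12) — Automatic recording of events for traceability throughout the system’s lifetime; providers (Art. 19) and deployers (Art. 26) must keep the logs under their control for at least 6 months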

- Transparency & information (Art. 13) — Clear, comprehensive instructions enabling deployers to understand and properly use the system

- Human oversight (Art. 14) — Built-in measures allowing humans to effectively supervise, intervene in, or override the system

- Accuracy, robustness & cybersecurity (Art. 15) — Performance levels appropriate to the system’s intended purpose, resilient to errors and adversarial attacks
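The logging requirement pairs automatic event recording with a six-month retention floor. The sketch below attaches an earliest-deletion date to each log record. `LogEvent`, `add_months`, and the field names are hypothetical, and counting six calendar months (clamping to the end of a shorter month) is an assumption, since the Act does not prescribe a counting method.

```python
import calendar
from dataclasses import dataclass, field
from datetime import datetime, timezone


def add_months(ts: datetime, months: int) -> datetime:
    """Add calendar months, clamping the day to the length of the target month."""
    month_index = ts.month - 1 + months
    year = ts.year + month_index // 12
    month = month_index % 12 + 1
    day = min(ts.day, calendar.monthrange(year, month)[1])
    return ts.replace(year=year, month=month, day=day)


@dataclass(frozen=True)
class LogEvent:
    """Hypothetical traceability record written automatically on each use."""
    system_id: str
    event: str
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def earliest_deletion(self, retention_months: int = 6) -> datetime:
        # Six months is a floor; other Union or national law may require longer.
        return add_months(self.timestamp, retention_months)

    def may_delete(self, now: datetime) -> bool:
        return now >= self.earliest_deletion()


event = LogEvent("credit-model-v3", "inference", datetime(2026, 8, 31, tzinfo=timezone.utc))
print(event.earliest_deletion().date())  # prints 2027-02-28
```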


Who Must Comply: AI Act Obligations by Operator Role

The EU AI Act doesn’t assign a single compliance burden — it distributes obligations across six operator categories, each with distinct responsibilities. Understanding your role in the AI value chain is the first step to determining your obligations.

Providers (Heaviest Obligations)

Providers — those who develop or commission an AI system and place it on the market under their own name — bear the most extensive duties:

- Ensure compliance with all Section 2 requirements throughout the lifecycle

- Implement a quality management system (Art. 17)

- Conduct conformity assessments before market placement (Art. 43)

- Draw up an EU Declaration of Conformity and affix CE marking

- Register the system in the EU database

- Maintain automatic logs for ≥6 months

- Take corrective actions for non-conformity and notify authorities of risks

Deployers

Organizations using high-risk AI systems under their authority (but not developing them) must:

- Use the system in accordance with instructions

- Ensure human oversight by competent, trained personnel

- Monitor system operation and report malfunctions to providers

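- Conduct a fundamental rights impact assessment (Art. 27) where required, namely for public bodies, private entities providing public services, and deployers of creditworthiness or life and health insurance pricing systems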

- Inform natural persons that they are subject to a high-risk AI system

Importers and Distributors

Importers must verify the provider has completed conformity assessment, CE marking, and documentation before placing the system on the EU market. Distributors must verify CE marking and ensure proper storage/transport conditions.

The “Becoming a Provider” Trigger

Critically, you become the provider of a high-risk AI system if you:

- Put your name or trademark on it

- Make a substantial modification

- Change its intended purpose so it becomes high-risk

This means that building a high-risk application on a third-party model, for example by fine-tuning it and deploying the result under your own brand, could trigger full provider obligations. That is a significant consideration for organizations building on foundation models.
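Because a single trigger is enough, the check reduces to a boolean test. A minimal sketch assuming a simplified reading of Article 25(1): rebranding and substantial modification matter for systems that are already high-risk, and a change of purpose matters when it makes a system high-risk. `ModificationFacts` and `becomes_provider` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ModificationFacts:
    """Hypothetical facts about how an organization uses a third-party AI system."""
    system_is_high_risk: bool
    rebranded_under_own_name: bool = False
    substantial_modification: bool = False
    repurposed_into_high_risk_use: bool = False


def becomes_provider(facts: ModificationFacts) -> bool:
    """Return True if any Article 25(1)-style trigger applies (simplified)."""
    if facts.repurposed_into_high_risk_use:
        # Changing the intended purpose so that the system becomes high-risk.
        return True
    # Rebranding or substantially modifying a system that is high-risk.
    return facts.system_is_high_risk and (
        facts.rebranded_under_own_name or facts.substantial_modification
    )
```

For example, `becomes_provider(ModificationFacts(system_is_high_risk=True, rebranded_under_own_name=True))` returns `True`, while the same rebranding of a minimal-risk system returns `False`.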

General-Purpose AI Models: Rules for Foundation Models Under the EU AI Act

Recognizing the unique nature and influence of large foundation models, from GPT-4 to Gemini to open-source alternatives, the EU AI Act introduces dedicated provisions for General-Purpose AI (GPAI) models. These have applied since 2 August 2025; models placed on the market before that date have until 2 August 2027 to comply.

What Qualifies as a GPAI Model?

A GPAI model displays “significant generality” and is capable of competently performing a wide range of distinct tasks. According to the Act’s recitals, models with at least one billion parameters trained on large amounts of data using self-supervision at scale should be considered to display significant generality. The Commission’s GPAI Guidelines (July 2025) add an indicative criterion: training compute above 10²³ FLOPs combined with the ability to generate language (text or audio), images from text, or video from text.

Standard GPAI Obligations

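All GPAI model providers must (providers of free and open-source models without systemic risk are exempt from the first two obligations):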

- Prepare and maintain technical documentation including training and testing details

- Provide information and documentation to downstream AI system providers

- Establish a copyright compliance policy and respect EU copyright law (including opt-out mechanisms)

- Publish a sufficiently detailed summary of training data content

Systemic Risk: Stricter Rules for the Most Powerful Models

GPAI models posing systemic risk — presumed when training compute exceeds 10²⁵ FLOPs, or designated by the Commission based on capabilities — face additional obligations:

- Perform and document model evaluations including adversarial testing

- Assess and mitigate systemic risks (including risks from model interaction or cascading failures)

- Maintain adequate cybersecurity protections

- Report serious incidents to the AI Office without undue delay

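- Document the model’s known or estimated energy consumption (strictly, an Annex XI documentation item that applies to all GPAI providers, not only those with systemic risk)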
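Training compute drives both thresholds in this section: the indicative 10²³ FLOPs criterion for GPAI status and the 10²⁵ FLOPs presumption of systemic risk. The sketch below estimates compute with the common rule of thumb of roughly 6 FLOPs per parameter per training token for dense transformers. That heuristic, the helper names, and the example model size are assumptions. Compute alone does not settle either question, since GPAI status also depends on generality and output modalities, and the Commission can designate systemic-risk models on other grounds.

```python
# Thresholds discussed in this section (FLOPs of cumulative training compute).
GPAI_INDICATIVE_FLOPS = 1e23   # indicative criterion, Commission GPAI Guidelines
SYSTEMIC_RISK_FLOPS = 1e25     # presumption of systemic risk


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rule-of-thumb estimate for dense transformers: ~6 FLOPs per parameter per token."""
    return 6.0 * parameters * training_tokens


def compute_label(training_flops: float) -> str:
    """Coarse label from compute alone; other criteria still need checking."""
    if training_flops > SYSTEMIC_RISK_FLOPS:
        return "presumed systemic risk"
    if training_flops > GPAI_INDICATIVE_FLOPS:
        return "above GPAI indicative threshold"
    return "below GPAI indicative threshold"


# Hypothetical model: 70 billion parameters trained on 15 trillion tokens.
flops = estimate_training_flops(70e9, 15e12)
print(f"{flops:.1e}", compute_label(flops))  # prints 6.3e+24 above GPAI indicative threshold
```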

The General-Purpose AI Code of Practice, published in July 2025, gives providers a voluntary way to demonstrate compliance with these obligations.

EU AI Act Implementation Timeline: Key Compliance Deadlines

The Act follows a staggered implementation, giving organizations time to adapt — but several critical deadlines have already passed or are imminent:

| Date | Milestone | Status |
|---|---|---|
| 1 Aug 2024 | Entry into force. Obligations apply in later phases. | ✅ Done |
| 2 Feb 2025 | General provisions (including AI literacy obligations) and prohibited practices apply. | ✅ Done |
| 2 Aug 2025 | GPAI model rules. EU governance bodies operational. | ✅ Done |
| 2 Aug 2026 | Full obligations for Annex III high-risk AI systems. Transparency obligations. Deployer duties begin. | ⚠️ 5 months away |
| 2 Aug 2027 | High-risk AI in regulated products (medical devices, machinery, vehicles) must comply. | ⏳ Upcoming |
| 31 Dec 2030 | AI components in large-scale IT systems (Annex X) must be brought into compliance. | ⏳ Upcoming |

Important: In November 2025, the Commission proposed a Digital Omnibus regulation with targeted amendments to the AI Act, including extending certain deadlines and easing compliance costs for SMEs. As of March 2026, the proposal had not been adopted. Organizations should plan for the current statutory deadlines while monitoring Omnibus developments.
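The staggered dates lend themselves to a simple lookup. A sketch assuming the current statutory dates from the timeline table; `MILESTONES`, `reached`, and `next_milestone` are hypothetical names, and the list would need updating if the Digital Omnibus proposal changes any deadline.

```python
from datetime import date
from typing import List, Optional, Tuple

# Statutory dates from the implementation timeline (as currently legislated).
MILESTONES: List[Tuple[date, str]] = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy"),
    (date(2025, 8, 2), "GPAI model rules and governance bodies"),
    (date(2026, 8, 2), "Annex III high-risk systems and transparency obligations"),
    (date(2027, 8, 2), "High-risk AI in regulated products"),
    (date(2030, 12, 31), "Annex X large-scale IT system components"),
]


def reached(on: date) -> List[str]:
    """Milestones whose date has arrived on the given day (inclusive)."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """The first milestone strictly after the given day, or None if all have passed."""
    upcoming = [item for item in MILESTONES if item[0] > on]
    return upcoming[0] if upcoming else None


today = date(2026, 3, 1)
due, label = next_milestone(today)
print(label, (due - today).days, "days away")
# prints Annex III high-risk systems and transparency obligations 154 days away
```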

Penalties, Enforcement, and Governance Under the EU AI Act

The EU AI Act establishes a robust enforcement framework with tiered penalties calibrated to the severity of the infringement:

| Violation Type | Maximum Fine (whichever is higher) |
|---|---|
| Prohibited AI practices (Article 5) | €35 million or 7% of global annual turnover |
| High-risk AI system non-compliance & GPAI obligations | €15 million or 3% of global annual turnover |
| Providing incorrect/misleading information to authorities | €7.5 million or 1% of global annual turnover |

For SMEs, including startups, each ceiling is the lower of the two amounts rather than the higher, keeping penalties dissuasive without being existence-threatening for smaller players.
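Each tier is a ceiling computed from two amounts: a fixed sum and a percentage of worldwide annual turnover, taking the higher for most undertakings and the lower for SMEs. A minimal sketch of that arithmetic; `FineTier` and `max_fine` are hypothetical names, and actual fines are set case by case below these ceilings.

```python
from enum import Enum


class FineTier(Enum):
    # (fixed amount in euros, percent of worldwide annual turnover)
    PROHIBITED_PRACTICES = (35_000_000, 7)
    OTHER_OBLIGATIONS = (15_000_000, 3)  # high-risk, transparency and other operator duties
    INCORRECT_INFORMATION = (7_500_000, 1)


def max_fine(tier: FineTier, worldwide_turnover_eur: float, is_sme: bool = False) -> float:
    """Ceiling for a tier: the higher of the two amounts, or the lower one for SMEs."""
    fixed_amount, percent = tier.value
    turnover_amount = worldwide_turnover_eur * percent / 100
    if is_sme:
        return min(fixed_amount, turnover_amount)
    return max(fixed_amount, turnover_amount)


print(max_fine(FineTier.PROHIBITED_PRACTICES, 2_000_000_000))           # prints 140000000.0
print(max_fine(FineTier.PROHIBITED_PRACTICES, 10_000_000, is_sme=True))  # prints 700000.0
```

The second call shows why the SME rule matters: with €10 million turnover, the 7% amount (€700,000) rather than €35 million becomes the ceiling.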

Governance Architecture

Enforcement is split between national and EU-level bodies:

- National market surveillance authorities — Enforce the Act within each member state, conducting investigations, inspections, and imposing penalties

- The AI Office (within the European Commission) — Oversees GPAI model compliance, coordinates cross-border enforcement, develops codes of practice and guidelines

- The AI Board — Advisory body of member state representatives ensuring consistent application

- Scientific Panel — Independent experts advising on systemic risk, model evaluation methodologies, and emerging threats

Individual Rights

The Act also empowers individuals with two important rights:

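- Right to explanation (Art. 86) — Where a deployer’s decision is based on the output of an Annex III high-risk AI system and has legal or similarly significant adverse effects on a person, that person can obtain from the deployer clear, meaningful explanations of the AI system’s role in the decision and the main elements of the decision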

- Right to complain (Art. 85) — Anyone may file complaints with market surveillance authorities about suspected infringements

Your EU AI Act Compliance Roadmap: Practical Steps

With the August 2026 deadline for high-risk AI systems rapidly approaching, organizations need to move from understanding to action. Here is a practical compliance roadmap:

Step 1: AI System Inventory & Classification

Map every AI system within your organization. For each, determine:

- Does it meet the Act’s definition of an “AI system” (does it infer)?

- What risk category does it fall into?

- What is your operator role (provider, deployer, importer, distributor)?

- Does any exception to high-risk classification apply?
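An inventory is easier to act on when it is sortable. The sketch below records the main Step 1 answers for each system and orders the in-scope ones for review: unclassified systems first, then by descending risk. `InventoryEntry`, `RiskTier`, and `triage` are hypothetical names, and the ordering is one reasonable policy rather than anything the Act prescribes.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List, Optional


class RiskTier(IntEnum):
    # Higher values get reviewed first.
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    UNACCEPTABLE = 3


@dataclass
class InventoryEntry:
    """One row of a hypothetical AI system inventory."""
    name: str
    infers: bool                     # meets the Act's "AI system" definition?
    role: str                        # "provider", "deployer", "importer", "distributor"
    tier: Optional[RiskTier] = None  # None until classification is done


def triage(entries: List[InventoryEntry]) -> List[str]:
    """Names of in-scope systems: unclassified first, then highest risk first."""
    candidates = [e for e in entries if e.infers]
    candidates.sort(
        key=lambda e: (e.tier is not None, -int(e.tier) if e.tier is not None else 0)
    )
    return [e.name for e in candidates]


entries = [
    InventoryEntry("spam-filter", infers=True, role="deployer", tier=RiskTier.MINIMAL),
    InventoryEntry("cv-screener", infers=True, role="deployer", tier=RiskTier.HIGH),
    InventoryEntry("rules-engine", infers=False, role="provider"),
    InventoryEntry("support-chatbot", infers=True, role="provider", tier=RiskTier.LIMITED),
    InventoryEntry("new-vendor-tool", infers=True, role="deployer"),
]
print(triage(entries))
# prints ['new-vendor-tool', 'cv-screener', 'support-chatbot', 'spam-filter']
```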

Step 2: Gap Analysis Against Requirements

For each high-risk system, assess current compliance against Articles 8–15 requirements. Key gaps typically emerge in:

- Formal risk management documentation

- Training data governance and bias testing

- Human oversight mechanisms

- Technical documentation completeness
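A first pass at the gap analysis can be mechanical: list the requirement areas for which no evidence has been gathered yet. The sketch below does that for Articles 9 to 15; `REQUIREMENTS`, `find_gaps`, and the evidence file names are hypothetical, and recorded evidence only shows that work exists, not that it meets the standard.

```python
from typing import Dict, List

# Chapter III, Section 2 requirement areas (Article number -> heading).
REQUIREMENTS: Dict[int, str] = {
    9: "Risk management system",
    10: "Data and data governance",
    11: "Technical documentation",
    12: "Record-keeping",
    13: "Transparency and provision of information to deployers",
    14: "Human oversight",
    15: "Accuracy, robustness and cybersecurity",
}


def find_gaps(evidence: Dict[int, List[str]]) -> List[str]:
    """Requirement areas with no evidence recorded, in article order."""
    return [
        f"Art. {article}: {heading}"
        for article, heading in sorted(REQUIREMENTS.items())
        if not evidence.get(article)
    ]


evidence = {
    9: ["risk-register.xlsx"],
    11: ["technical-file-draft.docx"],
    14: ["override-procedure.pdf"],
}
for gap in find_gaps(evidence):
    print(gap)
```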

Step 3: Implement Governance Structures

- Appoint an AI compliance officer or assign responsibility within existing governance

- Build or adapt your quality management system

- Establish AI literacy training for all personnel involved with AI systems

- Create processes for fundamental rights impact assessments (required for deployers of certain high-risk systems)

Step 4: Technical Compliance

- Implement logging and monitoring infrastructure

- Ensure human-in-the-loop or human-on-the-loop oversight mechanisms

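- Prepare for conformity assessment (internal control, i.e. self-assessment, for most Annex III systems; a notified body for biometric systems where harmonized standards or common specifications have not been fully applied; AI in Annex I products follows the existing sectoral procedure)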

- Prepare EU Declaration of Conformity and CE marking processes

Step 5: Register & Monitor

- Register high-risk AI systems in the EU database

- Establish post-market monitoring processes

- Set up serious incident reporting procedures

- Monitor codes of practice, harmonized standards, and Commission guidelines as they evolve
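Incident procedures need concrete clocks. As we read Article 73, providers of high-risk systems must report a serious incident immediately once a causal link is established or reasonably likely, and in any event within outer limits counted from when they become aware of it: 15 days in general, 10 days where a person has died, and 2 days for a widespread infringement or a serious disruption of critical infrastructure. The sketch below turns those limits into dates; `IncidentKind` and `report_deadline` are hypothetical names, and the day counts reflect our reading of the Regulation.

```python
from datetime import date, timedelta
from enum import Enum


class IncidentKind(Enum):
    # Outer reporting limit in days after awareness (our reading of Article 73).
    GENERAL = 15
    DEATH_OF_A_PERSON = 10
    WIDESPREAD_OR_CRITICAL_INFRASTRUCTURE = 2


def report_deadline(aware_on: date, kind: IncidentKind) -> date:
    """Latest permissible report date; reporting sooner is still expected."""
    return aware_on + timedelta(days=kind.value)


print(report_deadline(date(2026, 9, 1), IncidentKind.DEATH_OF_A_PERSON))  # prints 2026-09-11
```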


Regulatory sandboxes: The AI Act requires each member state to establish at least one AI regulatory sandbox by 2 August 2026. These provide controlled environments where organizations can develop, train, and test AI systems before market placement — with regulatory guidance and reduced compliance friction. SMEs and startups receive priority access.

Frequently Asked Questions

What is the EU AI Act and when does it take effect?

The EU AI Act (Regulation EU 2024/1689) is the world’s first comprehensive AI regulation. It entered into force on 1 August 2024, with provisions phasing in through 2027. Prohibited AI practices applied from 2 February 2025, and key obligations for high-risk systems apply from 2 August 2026.

What AI practices are banned under the EU AI Act?

Eight AI practices are outright prohibited: social scoring, subliminal manipulation, exploitation of vulnerabilities, predicting criminality from profiling, untargeted facial recognition scraping, emotion inference in workplaces/education, biometric categorization for sensitive attributes, and real-time remote biometric identification in public spaces (with narrow exceptions for law enforcement).

Does the EU AI Act apply to companies outside the EU?

Yes. Like the GDPR, the EU AI Act has broad extraterritorial reach. It applies to any provider placing an AI system on the EU market, any deployer located in the EU, non-EU providers and deployers whose AI outputs are used within the EU, and GPAI model providers placing models on the EU market from anywhere in the world.

What are the penalties for violating the EU AI Act?

Penalties reach up to €35 million or 7% of worldwide annual turnover, whichever is higher, for the most serious violations (prohibited practices). Other violations carry penalties of up to €15 million or 3% of turnover. Supplying incorrect information can result in fines of up to €7.5 million or 1% of turnover.

What is a high-risk AI system under the EU AI Act?

High-risk AI systems are classified through two pathways: AI that is a safety component of, or itself, a product covered by existing EU legislation (machinery, medical devices, vehicles) and subject to third-party conformity assessment, and AI in eight specific use areas: biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and the administration of justice and democratic processes. These systems face rigorous requirements for risk management, data governance, human oversight, and documentation.
