EU AI Act Regulation Explained: Risk Categories, Penalties & 2026 Timeline

Table of Contents

- What Is the EU AI Act (Regulation 2024/1689)?

- The Four-Tier Risk Classification Framework

- Prohibited AI Practices Under Article 5

- High-Risk AI Systems: Obligations and Requirements

- General-Purpose AI Models and Systemic Risk

- Transparency Obligations for Limited-Risk AI

- EU AI Act Enforcement Timeline: Key Dates Through 2027

- EU AI Act Penalties: Fines Up to 7% of Global Revenue

- Governance Structure and the EU AI Office

- Global Impact: How the EU AI Act Sets a Worldwide Standard

📌 Key Takeaways

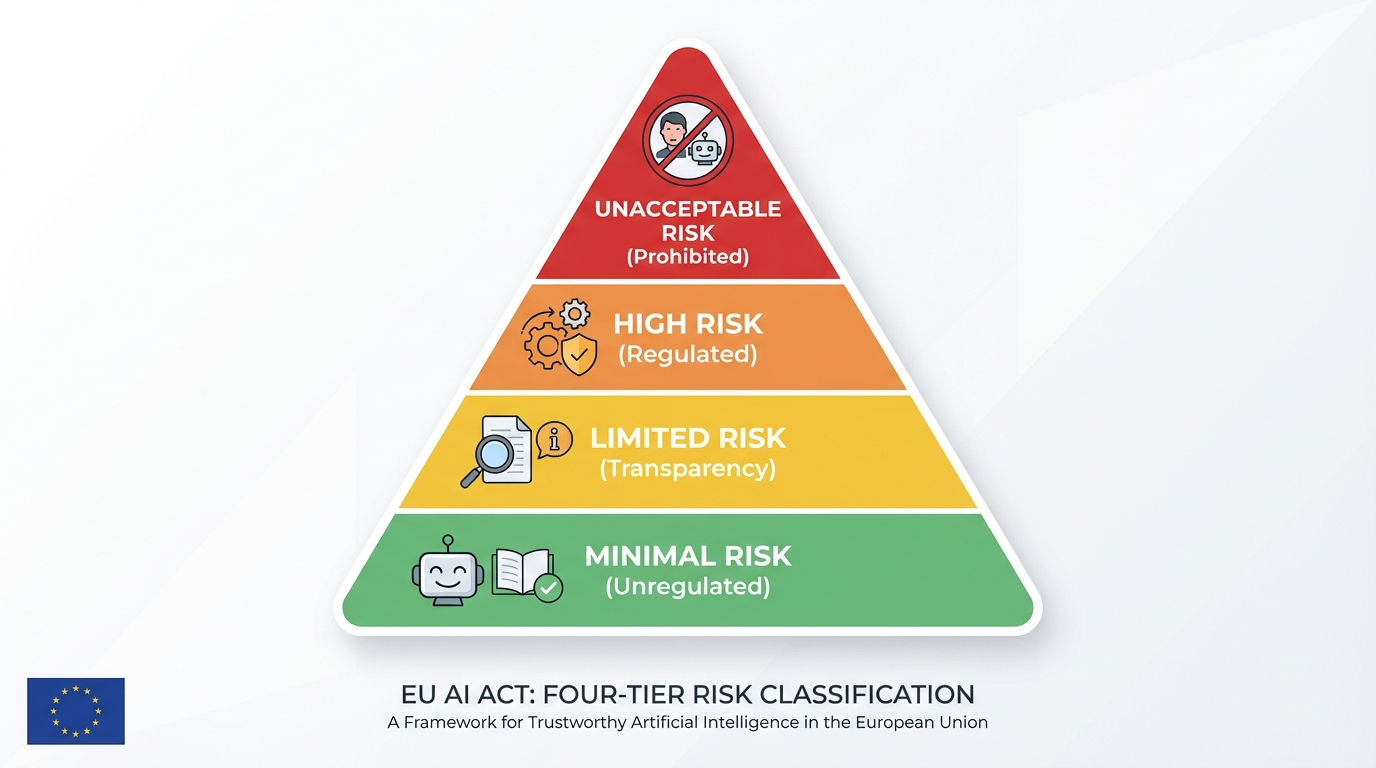

- Risk-based framework: The EU AI Act classifies AI systems into four tiers — unacceptable, high, limited, and minimal risk — with obligations scaled accordingly.

- Eight prohibited practices: Social scoring, subliminal manipulation, untargeted facial scraping, and five other AI applications have been completely banned since February 2, 2025.

- Massive penalties: Fines reach up to €35 million or 7% of global annual turnover for the most serious violations — exceeding GDPR’s maximum penalties.

- August 2026 deadline: High-risk AI system obligations become enforceable on August 2, 2026, making compliance planning urgent for organizations deploying AI in the EU.

- Extraterritorial reach: Like GDPR, the regulation applies to any company whose AI systems operate in or affect the EU market, regardless of where the company is headquartered.

What Is the EU AI Act (Regulation 2024/1689)?

The EU AI Act regulation — officially Regulation (EU) 2024/1689 of the European Parliament and of the Council — is the world’s first comprehensive legal framework governing artificial intelligence. Adopted on June 13, 2024, and entering into force on August 1, 2024, this landmark legislation establishes harmonized rules for the development, deployment, and use of AI systems across all 27 EU member states.

Unlike voluntary codes of conduct or sector-specific guidelines, the EU AI Act is a directly applicable regulation — meaning it does not require national transposition and creates binding obligations for providers, deployers, importers, and distributors of AI systems. The regulation spans 113 articles and 13 annexes, making it one of the most detailed pieces of technology legislation ever enacted.

The Act’s stated objectives are threefold: to protect fundamental rights, health, and safety; to create legal certainty for AI investment and innovation across the single market; and to enhance governance and enforcement of existing EU law on fundamental rights. It achieves this through a risk-based classification system that assigns different levels of regulatory obligation based on the potential harm an AI system can cause.

For organizations building or deploying AI — whether in Europe or targeting the European market — the EU AI Act regulation represents a paradigm shift. It moves AI governance from the realm of self-regulation into binding law, with enforcement powers and penalties that rival (and in some cases exceed) those of the General Data Protection Regulation (GDPR).

The Four-Tier EU AI Act Risk Classification Framework

The cornerstone of the EU AI Act regulation is its risk-based approach to AI classification. Rather than regulating all AI systems identically, the Act creates a tiered framework where obligations are proportional to the level of risk an AI system poses to health, safety, and fundamental rights. This structure draws on decades of EU product safety regulation while adapting the principles for the unique challenges of artificial intelligence.

Unacceptable Risk (Prohibited)

At the top of the pyramid, certain AI applications are deemed so dangerous that they are completely banned. These prohibited AI practices, defined in Article 5, include social scoring, subliminal manipulation, and certain forms of biometric surveillance. The prohibition became enforceable on February 2, 2025 — just six months after the Act entered into force — reflecting the urgency the EU places on eliminating these practices.

High Risk (Regulated)

The largest portion of the regulation addresses high-risk AI systems. These are AI systems used in areas where failures or biases could cause significant harm — such as critical infrastructure, employment decisions, credit scoring, law enforcement, and education. Providers of high-risk systems must meet extensive requirements for risk management, data quality, documentation, transparency, human oversight, accuracy, and cybersecurity.

Limited Risk (Transparency Obligations)

AI systems that interact directly with humans — such as chatbots, emotion recognition systems, and deepfake generators — are subject to specific transparency obligations. Users must be informed that they are interacting with AI, and AI-generated or manipulated content must be labeled accordingly. These obligations are lighter than high-risk requirements but still carry enforcement consequences.

Minimal Risk (Unregulated)

The vast majority of AI systems currently on the market — including spam filters, AI-powered video games, and recommendation algorithms — fall into the minimal risk category and are not subject to specific obligations under the Act. Providers are encouraged to voluntarily adopt codes of conduct, but this is not mandatory. As noted in the Stanford AI Index 2025, this category encompasses the overwhelming majority of commercial AI applications.

Prohibited AI Practices Under Article 5

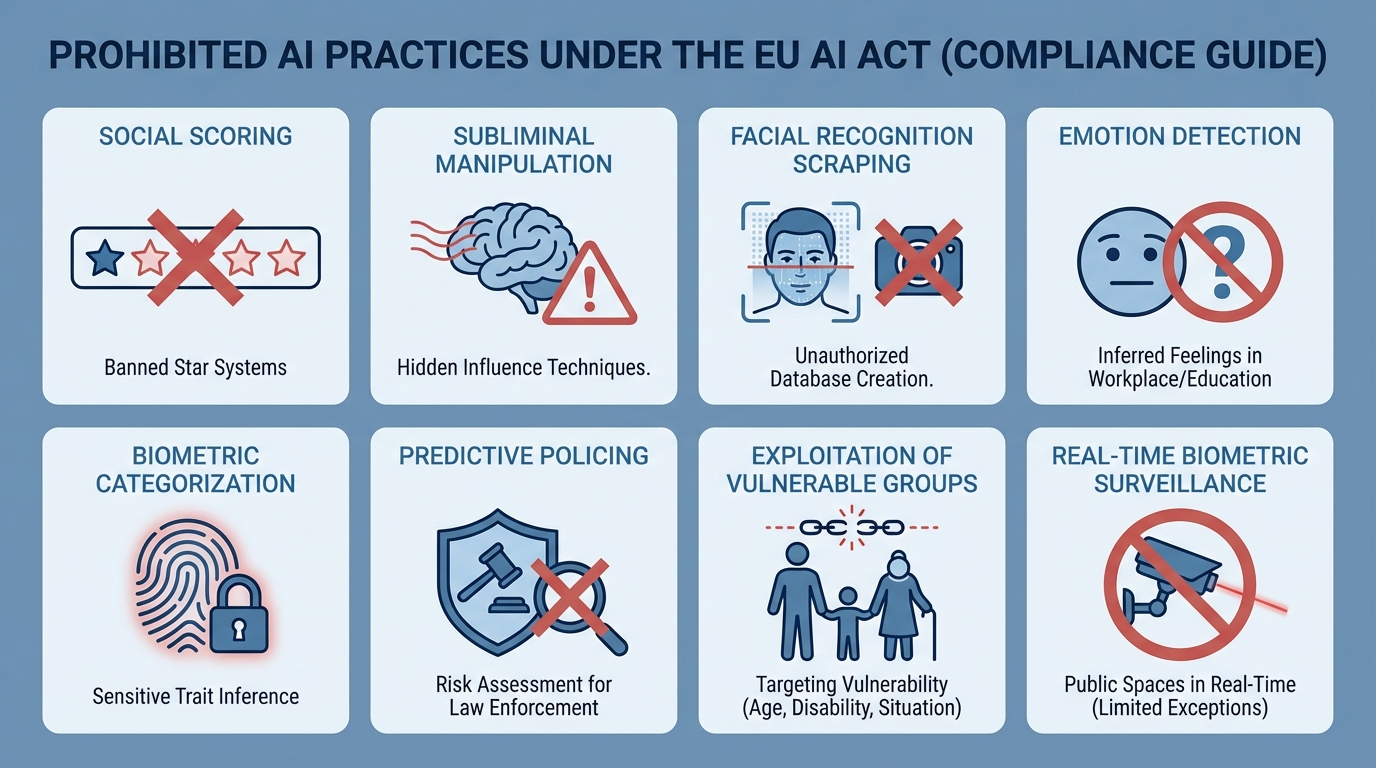

Article 5 of the EU AI Act regulation establishes eight categories of AI practices that are completely banned within the European Union. These prohibitions reflect the EU’s determination to draw clear red lines around AI applications that fundamentally conflict with European values and fundamental rights.

The eight prohibited categories are:

- Subliminal manipulation: AI systems deploying subliminal, manipulative, or deceptive techniques that distort behavior and impair informed decision-making, causing significant harm.

- Exploitation of vulnerabilities: AI that exploits vulnerabilities related to age, disability, or socio-economic circumstances to materially distort behavior, causing significant harm.

- Social scoring: AI systems that evaluate or classify individuals based on social behavior or personal traits, leading to detrimental or unfavorable treatment unrelated to the original context.

- Predictive policing by profiling: AI assessing an individual’s risk of committing criminal offenses solely based on profiling or personality traits, except when augmenting human assessments based on objective, verifiable facts.

- Untargeted facial recognition scraping: Compiling facial recognition databases through untargeted scraping of facial images from the internet or CCTV footage.

- Workplace and school emotion inference: Inferring emotions in workplaces or educational institutions, except for medical or safety reasons.

- Biometric categorization of sensitive attributes: Systems that categorize individuals based on biometric data to infer race, political opinions, trade union membership, religious beliefs, sex life, or sexual orientation.

- Real-time remote biometric identification: Use of real-time remote biometric identification (RBI) in publicly accessible spaces for law enforcement, with narrow exceptions for searching missing persons, preventing imminent threats, and identifying suspects in serious crimes.

These prohibitions became enforceable on February 2, 2025. The European Commission published detailed guidelines on the interpretation of these prohibitions to assist organizations in compliance assessments.

High-Risk AI Systems: Obligations and Requirements

The regulatory core of the EU AI Act regulation centers on high-risk AI systems, which face the most extensive compliance obligations. Article 6 establishes the classification rules, while Articles 8 through 27 detail the specific requirements that providers, deployers, importers, and distributors must meet.

How AI Systems Are Classified as High-Risk

An AI system is classified as high-risk through two pathways. First, under Article 6(1), an AI system is high-risk if it is used as a safety component of a product, or is itself a product, covered by the EU harmonization legislation listed in Annex I and required to undergo third-party conformity assessment. This includes AI in medical devices, automotive systems, machinery, toys, lifts, aviation equipment, and railway systems.

Second, under Article 6(2), AI systems deployed in the eight use-case areas listed in Annex III are high-risk:

- Biometrics: Remote biometric identification (not real-time in public for law enforcement, which is prohibited), biometric categorization, and emotion recognition.

- Critical infrastructure: AI managing road traffic, water, gas, heating, or electricity supply.

- Education and vocational training: Systems determining access to education, evaluating learning outcomes, or monitoring behavior during tests.

- Employment and worker management: AI used in recruitment, screening, hiring decisions, task allocation, performance monitoring, or termination decisions.

- Access to essential services: Credit scoring, insurance risk assessment, emergency service dispatch, and public benefit eligibility determination.

- Law enforcement: Polygraphs, evidence reliability assessment, crime analytics, and profiling in criminal investigations.

- Migration, asylum, and border control: Risk assessment tools, document authenticity verification, and visa application processing.

- Administration of justice: AI used for researching and interpreting legal facts or applying the law to facts.
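
As a rough illustration of the two pathways, the sketch below triages a system description into Article 6(1), Article 6(2), or neither. The `AISystemProfile` record, its field names, and the area labels are hypothetical shorthand invented for this example, not terms from the regulation. A real assessment depends on the full legal text and cannot be reduced to a lookup.

```python
from dataclasses import dataclass
from typing import Optional

# Shorthand labels for the eight Annex III areas listed above (not legal text).
ANNEX_III_AREAS = {
    "biometrics",
    "critical_infrastructure",
    "education",
    "employment",
    "essential_services",
    "law_enforcement",
    "migration_border_control",
    "justice",
}


@dataclass
class AISystemProfile:
    """Hypothetical intake record for a first-pass triage."""
    name: str
    annex_i_product_or_safety_component: bool = False
    needs_third_party_conformity_assessment: bool = False
    annex_iii_area: Optional[str] = None


def high_risk_pathway(profile: AISystemProfile) -> Optional[str]:
    """Return the Article 6 pathway that appears to apply, or None."""
    # Pathway 1: Annex I product (or safety component) that also requires
    # third-party conformity assessment. Both conditions must hold.
    if (profile.annex_i_product_or_safety_component
            and profile.needs_third_party_conformity_assessment):
        return "Article 6(1)"
    # Pathway 2: the intended use falls in one of the Annex III areas.
    if profile.annex_iii_area in ANNEX_III_AREAS:
        return "Article 6(2)"
    return None


cv_screener = AISystemProfile("CV screening tool", annex_iii_area="employment")
print(high_risk_pathway(cv_screener))  # Article 6(2)
```

A `None` result only means that neither pathway matched the inputs given; it says nothing about transparency duties or prohibited practices, which are assessed separately.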

Mandatory Requirements for High-Risk AI Providers

Providers of high-risk AI systems must implement comprehensive compliance measures, as detailed in the EU AI Act Compliance Guide:

- Risk Management System (Article 9): A continuous, iterative process throughout the AI system lifecycle, including risk identification, estimation, evaluation, and mitigation.

- Data Governance (Article 10): Training, validation, and testing datasets must meet quality criteria including relevance, representativeness, accuracy, and completeness.

- Technical Documentation (Article 11): Comprehensive documentation demonstrating compliance, prepared before market placement and kept up to date.

- Record-Keeping (Article 12): Automatic logging of events throughout the system’s lifetime to ensure traceability.

- Transparency (Article 13): Systems must be sufficiently transparent for deployers to interpret outputs and use them appropriately.

- Human Oversight (Article 14): Systems must be designed to allow effective oversight by natural persons during use.

- Accuracy, Robustness, and Cybersecurity (Article 15): Systems must achieve appropriate levels of accuracy and be resilient to errors, faults, and attacks.

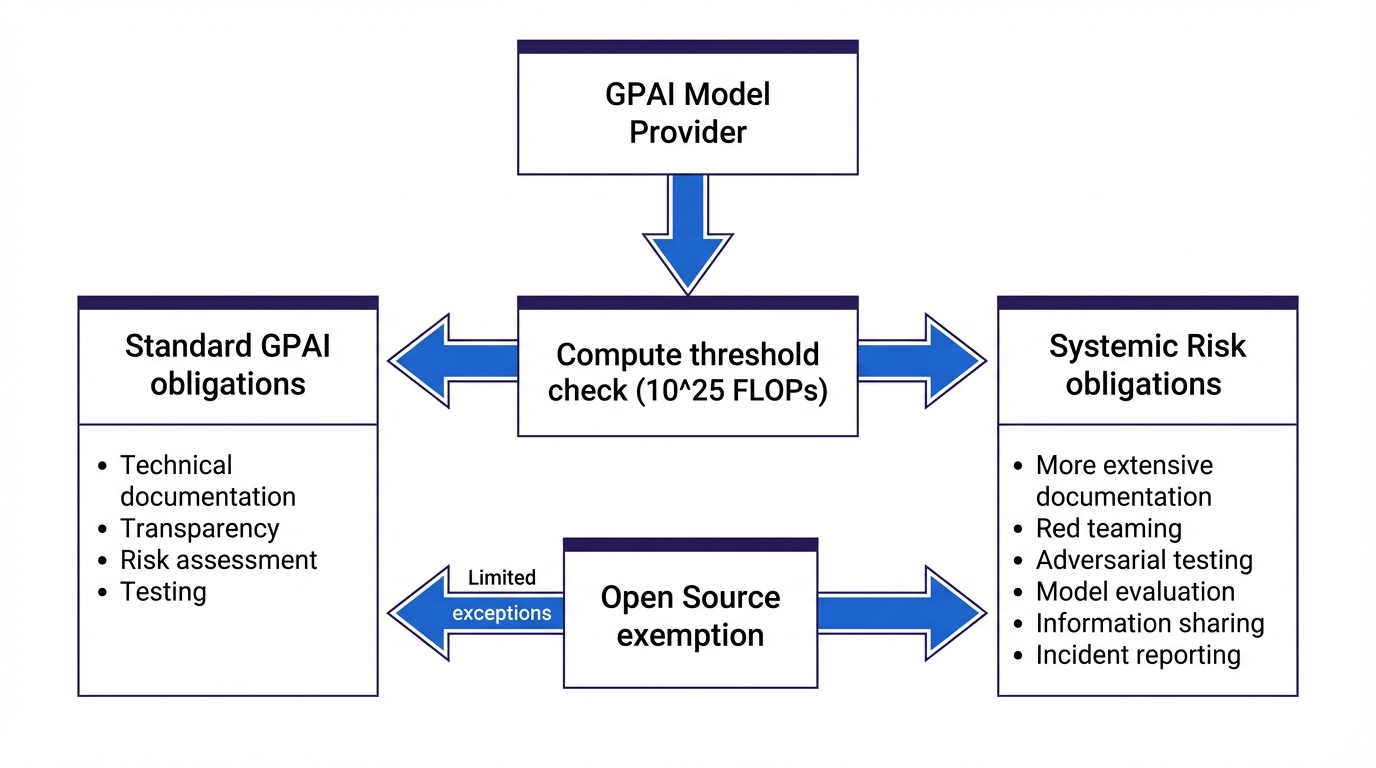

General-Purpose AI Models and Systemic Risk

One of the most debated provisions of the EU AI Act regulation addresses general-purpose AI (GPAI) models, meaning foundation models capable of performing a wide range of tasks. This includes large language models like GPT-4, Claude, and Gemini, as well as other multimodal systems. The rules for GPAI providers have applied since August 2, 2025.

Obligations for All GPAI Model Providers

Every provider of a GPAI model must, regardless of the model’s risk level:

- Prepare and maintain detailed technical documentation of the model and its training process.

- Provide clear information and documentation to downstream providers integrating the model into their AI systems.

- Comply with the EU’s Copyright Directive, including respecting opt-out mechanisms for text and data mining.

- Publish a sufficiently detailed summary of training data content, following a template provided by the AI Office.

The Act includes a notable carve-out for free and open-source GPAI models: they need only comply with the copyright and training data summary requirements, unless they present a systemic risk. This reflects the EU’s intention to encourage open AI development, a trend documented in the State of AI 2025 McKinsey Report.

Systemic Risk: Additional Obligations

GPAI models that pose systemic risk — initially defined as models trained with total compute exceeding 10²⁵ FLOPs — face additional requirements:

- Conduct and document model evaluations, including adversarial testing.

- Assess and mitigate systemic risks, including through red-teaming exercises.

- Track, document, and report serious incidents to the AI Office and relevant national authorities.

- Ensure adequate cybersecurity protections for the model and its physical infrastructure.
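
To get a feel for where the 10²⁵ FLOPs line sits, the sketch below compares an estimated training budget against it. The estimate uses the widely cited 6 × parameters × tokens rule of thumb for dense transformer training, which is an assumption of this example and not a method prescribed by the Act. The model sizes are made-up inputs.

```python
SYSTEMIC_RISK_FLOP_THRESHOLD = 1e25  # compute threshold cited in the text above


def estimate_training_flops(parameters: float, training_tokens: float) -> float:
    """Rough training compute via the 6 * N * D heuristic (an assumption)."""
    return 6.0 * parameters * training_tokens


def exceeds_systemic_risk_threshold(training_flops: float) -> bool:
    """True when estimated training compute exceeds the threshold."""
    return training_flops > SYSTEMIC_RISK_FLOP_THRESHOLD


# Hypothetical models: 7B parameters on 2T tokens, 400B parameters on 15T tokens.
small = estimate_training_flops(7e9, 2e12)     # about 8.4e22 FLOPs
large = estimate_training_flops(4e11, 1.5e13)  # about 3.6e25 FLOPs

print(exceeds_systemic_risk_threshold(small))  # False
print(exceeds_systemic_risk_threshold(large))  # True
```

The heuristic ignores factors such as fine-tuning runs and sparse architectures, so it is best treated as an order-of-magnitude check.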

Transparency Obligations for Limited-Risk AI

Beyond high-risk systems, the EU AI Act regulation imposes specific transparency requirements on certain AI systems regardless of their risk classification. Article 50 establishes these obligations to ensure that individuals can make informed decisions about their interactions with AI.

Providers of AI systems intended to interact directly with people — such as chatbots, virtual assistants, and AI-generated voice services — must ensure users are informed they are interacting with an AI system. This obligation does not apply where it is obvious from the circumstances and context of use.

For deepfakes and synthetic content, the Act requires clear labeling. AI-generated or manipulated images, audio, or video content (“deepfakes”) must be disclosed as artificially generated. This includes AI-generated text published to inform the public on matters of public interest, which must be labeled as AI-generated.

Deployers of emotion recognition or biometric categorization systems must inform the persons exposed to the system and process personal data in accordance with GDPR, Regulation (EU) 2018/1725 (the data protection rules for EU institutions), and the Law Enforcement Directive, as applicable.

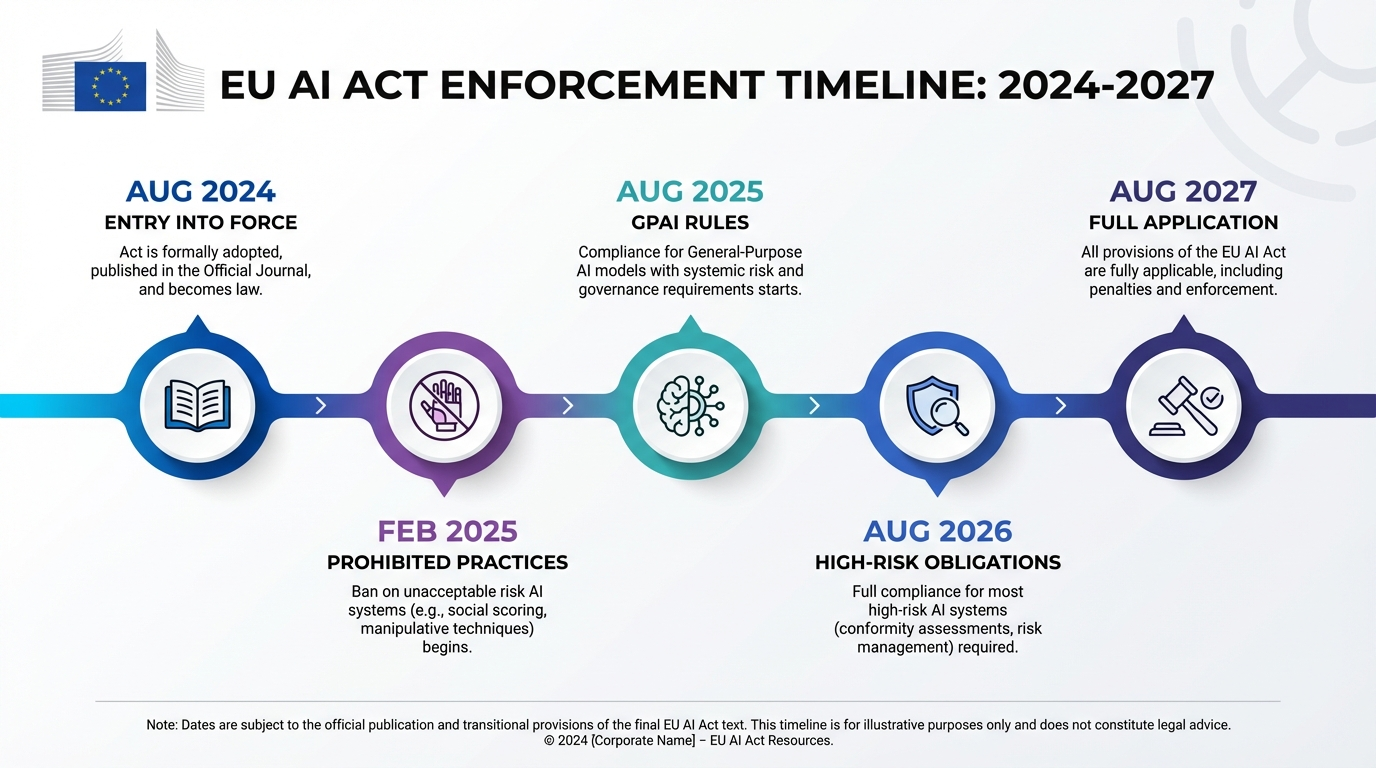

EU AI Act Enforcement Timeline: Key Dates Through 2027

The EU AI Act regulation follows a phased enforcement timeline designed to give organizations time to prepare while prioritizing the most urgent prohibitions. Understanding these dates is critical for compliance planning, because the time left to prepare is shrinking quickly.

| Date | Milestone | Key Articles |
|---|---|---|
| August 1, 2024 | EU AI Act enters into force | All |
| February 2, 2025 | Prohibited AI practices and AI literacy obligations apply | Art. 5, Art. 4 |
| August 2, 2025 | GPAI model obligations and governance rules apply | Art. 51–56, Chapter VII |
| February 2, 2026 | Deadline for Commission guidelines on high-risk classification | Art. 6(5), Art. 96 |
| August 2, 2026 | High-risk AI system obligations apply (Annex III); fines for GPAI model providers become applicable | Art. 6(2), Art. 8–27, Art. 101 |
| August 2, 2027 | Full application, including high-risk AI in Annex I products | Art. 6(1), all remaining |

For organizations deploying high-risk AI in the EU, the August 2, 2026 deadline is the most consequential near-term milestone. As noted by the European Commission’s digital strategy division, national authorities must have their enforcement infrastructure in place by this date.
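
For compliance planning, the phased dates can be kept as a small lookup that answers "what already applies on a given day?" and "what comes next?". The sketch below is one way to do that. The function names and milestone labels are illustrative, and the list covers only the application dates, not the Commission's own guideline deadline.

```python
from datetime import date
from typing import List, Optional, Tuple

# Application dates taken from the timeline above; labels are shorthand.
MILESTONES: List[Tuple[date, str]] = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy obligations"),
    (date(2025, 8, 2), "GPAI model obligations and governance rules"),
    (date(2026, 8, 2), "High-risk obligations for Annex III systems"),
    (date(2027, 8, 2), "High-risk obligations for Annex I products"),
]


def milestones_in_effect(on: date) -> List[str]:
    """Labels of every milestone whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date) -> Optional[Tuple[date, str]]:
    """The earliest milestone strictly after `on`, or None if all have passed."""
    upcoming = [(start, label) for start, label in MILESTONES if start > on]
    return min(upcoming) if upcoming else None


today = date(2026, 1, 15)  # example date, chosen arbitrarily
print(milestones_in_effect(today))
upcoming = next_milestone(today)
if upcoming is not None:
    start, label = upcoming
    print(f"{(start - today).days} days until: {label}")
```

Keeping the dates in one list means a change to the schedule only has to be made in one place.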

EU AI Act Penalties: Fines Up to 7% of Global Revenue

The EU AI Act penalties are structured in three tiers, designed to be effective, proportionate, and dissuasive. Article 99 establishes the maximum fine levels, which represent some of the highest penalties in technology regulation worldwide.

Tier 1: Prohibited Practices (Highest Penalties)

Non-compliance with the prohibited AI practices in Article 5 attracts the most severe sanctions: administrative fines of up to €35 million or, for undertakings, up to 7% of total worldwide annual turnover for the preceding financial year, whichever is higher. For context, 7% of Google’s 2024 revenue would exceed $21 billion.

Tier 2: High-Risk Obligations (Mid-Level Penalties)

Non-compliance with obligations related to high-risk AI systems, GPAI models, and notified bodies attracts fines of up to €15 million or up to 3% of global annual turnover, whichever is higher. This covers failures in risk management, data governance, transparency, human oversight, and conformity assessment requirements.

Tier 3: Incorrect Information (Lower Penalties)

Supplying incorrect, incomplete, or misleading information to national authorities or notified bodies attracts fines of up to €7.5 million or 1% of total worldwide annual turnover, whichever is higher (Article 99(5)).

SME and Startup Protections

The EU AI Act regulation also includes proportionality safeguards for small and medium-sized enterprises. For SMEs, including startups, each fine is capped at the lower of the fixed amount and the turnover percentage for the relevant tier (Article 99(6)), rather than the higher of the two. National authorities must also take the company's size and economic viability into account when setting the amount. The regulation further instructs the Commission to provide guidelines on penalty implementation that account for SME interests.
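
The penalty ceilings reduce to simple arithmetic: take the fixed amount and the turnover percentage for the tier, then apply the higher of the two, or the lower of the two for SMEs under Article 99(6). The sketch below encodes that rule using the Article 99 figures of 7%, 3%, and 1%. The tier keys and the function name are hypothetical, and the result is only the statutory maximum, not a prediction of an actual fine.

```python
# (fixed cap in EUR, percent of total worldwide annual turnover) per tier.
# Tier keys are shorthand invented for this example.
TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> float:
    """Upper bound of the fine for a tier, in euros."""
    fixed_cap, percent = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent / 100
    # Undertakings in general: whichever is higher. SMEs: whichever is lower.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


# A company with EUR 1 billion turnover violating Article 5:
print(max_fine_eur("prohibited_practice", 1_000_000_000))           # 70000000.0
# An SME with EUR 10 million turnover, same violation:
print(max_fine_eur("prohibited_practice", 10_000_000, is_sme=True))  # 700000.0
```

Within these ceilings, authorities still weigh factors such as the nature and gravity of the infringement, so actual fines can be far lower.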

Governance Structure and the EU AI Office

The EU AI Act regulation establishes a multi-layered governance architecture to oversee implementation and enforcement. At the EU level, the European AI Office — established within the European Commission — serves as the central coordinating body. It is responsible for overseeing GPAI model compliance, developing codes of practice, and providing guidance on the regulation’s interpretation.

The governance structure includes:

- European AI Board: Composed of one representative per member state, with the European Data Protection Supervisor participating as an observer. The Board advises and assists the Commission on consistent application of the regulation.

- National Competent Authorities: Each member state must designate at least one notifying authority and at least one market surveillance authority to oversee the regulation's application and enforcement at the national level.

- Advisory Forum: A balanced stakeholder group representing industry, civil society, academia, and other relevant interests to provide technical expertise to the AI Board and Commission.

- Scientific Panel: A group of independent experts supporting enforcement activities, particularly regarding GPAI models and systemic risk assessments.

This governance model mirrors the successful structure of multi-stakeholder frameworks the EU has used in other regulatory domains, though the scale and technical complexity of AI oversight will test its capacity.

Global Impact: How the EU AI Act Sets a Worldwide Standard

The EU AI Act regulation is widely expected to trigger a “Brussels Effect” — a phenomenon where EU regulations become the de facto global standard because multinational companies find it more efficient to comply with the strictest rules everywhere rather than maintain different systems for different jurisdictions.

This pattern already played out with GDPR, which inspired data protection laws in Brazil, Japan, South Korea, and dozens of other countries. The AI Act appears poised for a similar trajectory. As documented in the DeepSeek-R1 analysis, even AI companies developing models outside Europe must now consider EU AI Act compliance if their models could be deployed in the European market.

Several jurisdictions are already developing AI governance frameworks influenced by the EU approach:

- United States: While taking a more sector-specific approach, the Biden Executive Order on AI Safety (October 2023) and subsequent state-level AI bills share conceptual overlap with the risk-based framework.

- United Kingdom: The UK’s pro-innovation approach differs from the EU’s regulatory model but is increasingly converging on similar themes around high-risk AI oversight.

- Canada: The Artificial Intelligence and Data Act (AIDA) draws explicitly on the EU AI Act’s risk classification approach.

- China: China’s AI regulations — covering deepfakes, recommendation algorithms, and generative AI — address some of the same concerns through different regulatory mechanisms.

For global technology companies, the EU AI Act regulation effectively sets a compliance floor. Companies that meet its requirements will likely satisfy most other jurisdictions’ emerging AI rules. Those that ignore it risk being locked out of the world’s third-largest economy — and the 450 million consumers who depend on it.

Frequently Asked Questions

When does the EU AI Act fully apply?

The EU AI Act entered into force on August 1, 2024. Prohibited AI practices and AI literacy obligations have applied since February 2, 2025, and GPAI model rules since August 2, 2025. High-risk obligations for Annex III systems apply from August 2, 2026, while those for high-risk AI in products covered by Annex I apply from August 2, 2027, which is also the date of full application.

What are the penalty tiers under the EU AI Act?

The EU AI Act establishes three penalty tiers: up to €35 million or 7% of global annual turnover for prohibited AI practices; up to €15 million or 3% of turnover for non-compliance with high-risk AI obligations; and up to €7.5 million or 1% of turnover for supplying incorrect information to authorities. In each tier the higher amount applies, except for SMEs and startups, where the lower amount applies.

What AI practices are banned under the EU AI Act?

The EU AI Act prohibits eight categories of AI practices: subliminal manipulation techniques, exploitation of vulnerable groups, social scoring, predictive policing based solely on profiling, untargeted facial recognition database scraping, emotion inference in workplaces and schools, biometric categorization inferring sensitive attributes, and real-time remote biometric identification in public spaces for law enforcement (with narrow exceptions).

How does the EU AI Act classify high-risk AI systems?

High-risk AI systems fall into two categories under Article 6: (1) AI used as safety components of products covered by EU harmonization legislation in Annex I that require third-party conformity assessment, and (2) AI systems in eight specific use-case areas listed in Annex III, including biometrics, critical infrastructure, education, employment, essential services, law enforcement, migration, and justice. These systems must meet strict requirements for risk management, data governance, transparency, human oversight, and accuracy.

Does the EU AI Act apply to companies outside Europe?

Yes. The EU AI Act has extraterritorial reach. It applies to any provider placing AI systems on the EU market or putting them into service in the EU, regardless of where the provider is established. It also applies to deployers located within the EU and to providers or deployers in third countries where the AI system output is used in the EU. This makes it a global benchmark similar to GDPR.